65% of Canadian hospitals had already implemented AI-driven predictive analytics in their EHRs in 2024, up from 42% in 2023, according to a CIHI survey cited by Menlo Ventures’ 2025 healthcare AI overview. For large hospitals in Ontario and British Columbia, adoption reached 92% in facilities with more than 400 beds. That changes the conversation for a mid-sized hospital CIO.

This isn’t about whether AI belongs in your platform. It’s about where it belongs, how it connects to existing clinical systems, and how to deploy it without creating a compliance problem, a brittle architecture, or clinician pushback.

Most articles on AI integration in healthtech platforms still default to U.S. assumptions. They talk about HIPAA, broad cloud patterns, and generic vendor checklists. Canadian teams need different advice. PIPEDA, PHIPA, provincial procurement realities, data residency expectations, and fragmented legacy estates all shape what’s viable here. Hospital leaders also have access to a maturing domestic ecosystem, as discussed in Cleffex’s perspective on AI adoption in Canadian enterprises, which makes practical, phased adoption more realistic than it was even a year ago.

The Inevitable Shift of AI in Canadian Healthcare

Canadian hospitals have already crossed the point where AI can be treated as a side initiative. Adoption figures cited earlier make that clear. For a mid-sized hospital CIO, the question is no longer whether AI will touch core systems. The question is whether your current platform strategy can support it without creating security, compliance, and interoperability problems that are expensive to fix later.

I see the same pattern in hospital modernisation programs across Canada. Teams upgrade patient access, referrals, documentation, and analytics as separate projects, then discover their architecture cannot support model inference, audit trails, or governed data exchange across those workflows. The result is predictable. AI pilots look promising in isolation, but they stall when legal, privacy, clinical leadership, and infrastructure teams ask where data is processed, which system remains the source of truth, and how a recommendation is reviewed before it affects care.

That constraint is sharper in Canada than in the U.S. Generic health AI guidance still defaults to HIPAA, broad public cloud assumptions, and national-scale vendor operating models. Canadian providers have to address PIPEDA, PHIPA, provincial privacy expectations, data sovereignty requirements, and procurement rules that vary by health authority. A technically capable product can still be the wrong fit if it stores prompts or derived outputs outside Canada, makes audit review difficult, or cannot map cleanly into existing Epic, MEDITECH, Cerner, or provincial repository workflows.

This is why architecture choices made during routine platform work matter more than the AI model itself.

A sound strategy starts with a narrow operational or clinical problem and tests whether the surrounding systems can carry it in production. In practice, that means checking four things early:

Workflow fit: The model output has to appear inside the system clinicians or operations staff already use, not in a separate dashboard that gets ignored.

Data readiness: HL7, FHIR, imaging, scheduling, and identity data need consistent mappings and usable metadata before model performance becomes meaningful.

Human accountability: Triage support, summarisation, and documentation tools need clear review steps, role-based access, and traceable author attribution.

Canadian compliance fit: Procurement and architecture review should confirm residency, retention, consent handling, logging, and third-party processing terms before a pilot starts.

Hospitals that get traction are usually the ones that treat AI as a governed platform capability, not a feature added at the edge. That is also where a broader Canadian enterprise view helps. Teams planning multi-year modernisation can apply patterns already proven in adjacent regulated sectors, which Cleffex discusses in its guide to AI adoption across Canadian enterprises.

There is also a practical vendor screening issue here. Digital mental health, virtual triage, and patient engagement tools are often the first places leaders test AI because implementation risk is lower than in diagnostic workflows. Even then, due diligence matters. If your team is evaluating conversational care platforms, start by reviewing the product category and deployment implications before procurement. For example, you can discover Woebot Health via Flaex.ai and use that review as an input into your own privacy, integration, and clinical governance assessment.

The hospitals making progress with AI integration in healthtech platforms are not always the ones with the largest innovation budgets. They are the ones that make early decisions about integration standards, data control, review workflows, and Canadian compliance, then build from there.

Key AI Use Cases Transforming Patient Care and Operations

Canadian buyers are already placing real budgets behind AI. CAD 12.4 billion was invested in Canadian digital health in 2024, with 68% (CAD 8.4 billion) directed to AI-integrated healthtech platforms, and early adopters reported a 3.2x ROI within 18 months, according to Strativera’s Canadian healthcare AI transformation analysis. For a mid-sized hospital, the practical question is not whether AI has promising use cases. It is which ones can improve throughput, clinician time, and patient access without creating new compliance or integration debt under PIPEDA, PHIPA, and provincial data residency rules.

Clinical Documentation That Gives Time Back

Ambient documentation is one of the fastest paths to visible value. Hospitals adopt it because physician burnout is expensive, transcription workflows are slow, and documentation delays affect coding, discharge, and follow-up.

The technical bar is higher than many vendors suggest.

An ambient tool has to identify the right patient and encounter, generate a draft note with source traceability, preserve author attribution, and return the output into the chart in a format the EHR can use. If it produces free text that sits outside normal review steps, clinicians still have to rework the note, and the expected efficiency gain fades. In Canadian settings, this also raises direct questions about where raw audio, transcripts, and derived summaries are processed and stored.

The safer pattern is clinician-reviewed draft generation. Keep the AI output pending until the clinician accepts or edits it. That adds a step, but it protects chart integrity and gives compliance teams a clear point for audit and consent review.
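One way to picture the pending-until-reviewed pattern is as a small state object that refuses to release text to the chart before a clinician signs off. This is a minimal sketch, not a vendor or EHR API: `DraftNote`, `DraftStatus`, and every field name are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum
from typing import Optional


class DraftStatus(Enum):
    PENDING = "pending"
    ACCEPTED = "accepted"
    EDITED = "edited"


@dataclass
class DraftNote:
    """Hypothetical AI-generated draft that stays out of the chart until reviewed."""
    encounter_id: str
    ai_text: str
    model_version: str
    status: DraftStatus = DraftStatus.PENDING
    final_text: Optional[str] = None
    reviewed_by: Optional[str] = None
    reviewed_at: Optional[datetime] = None

    def review(self, clinician_id: str, edited_text: Optional[str] = None) -> None:
        """Record the clinician's decision; only pending drafts can be reviewed."""
        if self.status is not DraftStatus.PENDING:
            raise ValueError("draft has already been reviewed")
        changed = edited_text is not None and edited_text != self.ai_text
        self.final_text = edited_text if changed else self.ai_text
        self.status = DraftStatus.EDITED if changed else DraftStatus.ACCEPTED
        self.reviewed_by = clinician_id          # preserves author attribution
        self.reviewed_at = datetime.now(timezone.utc)

    def chart_text(self) -> str:
        """Return text for write-back; unreviewed drafts are never released."""
        if self.status is DraftStatus.PENDING or self.final_text is None:
            raise PermissionError("draft has not been reviewed by a clinician")
        return self.final_text
```

Keeping the original `ai_text` next to `final_text` gives audit teams a record of what the model proposed and what the clinician actually signed.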

Decision Support Tied to Workflow, Not Dashboards

Decision support can improve care when it appears at the moment a clinician can act on it. Sepsis risk, readmission flags, deterioration scoring, medication conflict detection, and imaging follow-up prompts are common examples. The hard part is not model accuracy in isolation. The hard part is delivering the recommendation with enough patient context, timing, and explainability that staff will use it.

In practice, effective decision support usually shares a few traits:

It pulls current data from the EHR, lab, ADT, pharmacy, and scheduling systems rather than relying on stale extracts.

It returns the recommendation inside the existing workflow.

It records what data informed the recommendation and what action the clinician took.

It supports local policy and escalation rules instead of forcing a generic vendor workflow onto the hospital.

That last point matters in Canada. A hospital in Ontario may need one escalation path for PHIPA-governed operations, while a multi-site system working across provinces may also need stricter controls around cross-border processing, vendor support access, and data retention. The integration approach should reflect those operating constraints. Teams planning these workflows usually benefit from established enterprise application architecture patterns for regulated systems before they start connecting models to production care pathways.

Administrative Automation With Measurable Operational Return

Administrative AI often reaches production sooner because the risks are easier to bound and the outcomes are easier to measure. Referral intake, appointment routing, coding support, prior authorisation support, document classification, and patient messaging are common starting points.

These projects succeed when the hospital fixes the process around the model. If referrals arrive through fax, scanned PDFs, portal uploads, and email attachments, the first requirement is a controlled ingestion layer with OCR, classification, confidence scoring, and exception handling. Otherwise, staff still spend their day sorting edge cases while the AI handles only clean inputs.
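The confidence scoring and exception handling described above can be sketched as a simple routing rule. The thresholds, labels, and queue names below are assumptions for illustration only; a real deployment would tune them against the hospital's own baseline data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Classification:
    """Hypothetical output of an OCR + document classification step."""
    document_id: str
    label: str
    confidence: float  # expected range: 0.0 to 1.0


def route(result: Classification,
          auto_threshold: float = 0.90,
          review_threshold: float = 0.60) -> str:
    """Decide where a classified document goes next.

    Thresholds are illustrative assumptions, not recommended values.
    """
    if not 0.0 <= result.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if result.confidence >= auto_threshold:
        return f"auto:{result.label}"      # file directly into the target queue
    if result.confidence >= review_threshold:
        return "human_review"              # staff confirm the suggested label
    return "exception_queue"               # unreadable or ambiguous input
```

The point of the three-way split is that staff only see the documents the model is unsure about, instead of re-sorting everything.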

I usually advise CIOs to start with one queue where baseline metrics already exist. Referral turnaround, no-show reduction, document backlog, and coding lag are better early targets than broad promises about enterprise transformation. They give finance, operations, and clinical leadership a shared view of value.

Digital Therapeutics and Patient Engagement

Patient-facing AI also has a place, especially in behavioural health, chronic care follow-up, and pre-visit support. The use case can be attractive because it improves access without touching the highest-acuity diagnostic decisions first.

Vendor review still needs discipline. If your team is assessing conversational care or digital mental health tools, use product research as one input, then run your own privacy, clinical safety, and integration assessment. For example, you can discover Woebot Health via Flaex.ai to understand how this category is positioned, then evaluate whether the product can meet your requirements for consent handling, record integration, Canadian hosting, and clinical oversight.

Imaging and Triage Support

Imaging and triage are high-volume environments where AI can help prioritise worklists and flag cases that need faster review. The business case is strongest where the queue is large, the service-level target is clear, and the handoff from image review to report and follow-up is already defined.

Poor integration breaks the value quickly. If PACS, RIS, reporting tools, and the patient record do not align on identifiers and status updates, staff end up reconciling exceptions manually. In that scenario, the model may still detect a relevant pattern, but the department does not get the operational gain it expected.

The best early use cases are the ones a hospital can measure before and after deployment. Time saved on charting. Referral backlog reduced. Faster imaging prioritisation. Fewer manual touches in intake. Those are the AI programmes that survive budget review and expand beyond pilot status.

Mapping Your Technical AI Integration Architecture

A hospital platform doesn’t become AI-enabled because it bought a model. It becomes AI-enabled because data, applications, and controls are arranged so the model can operate safely inside production workflows.

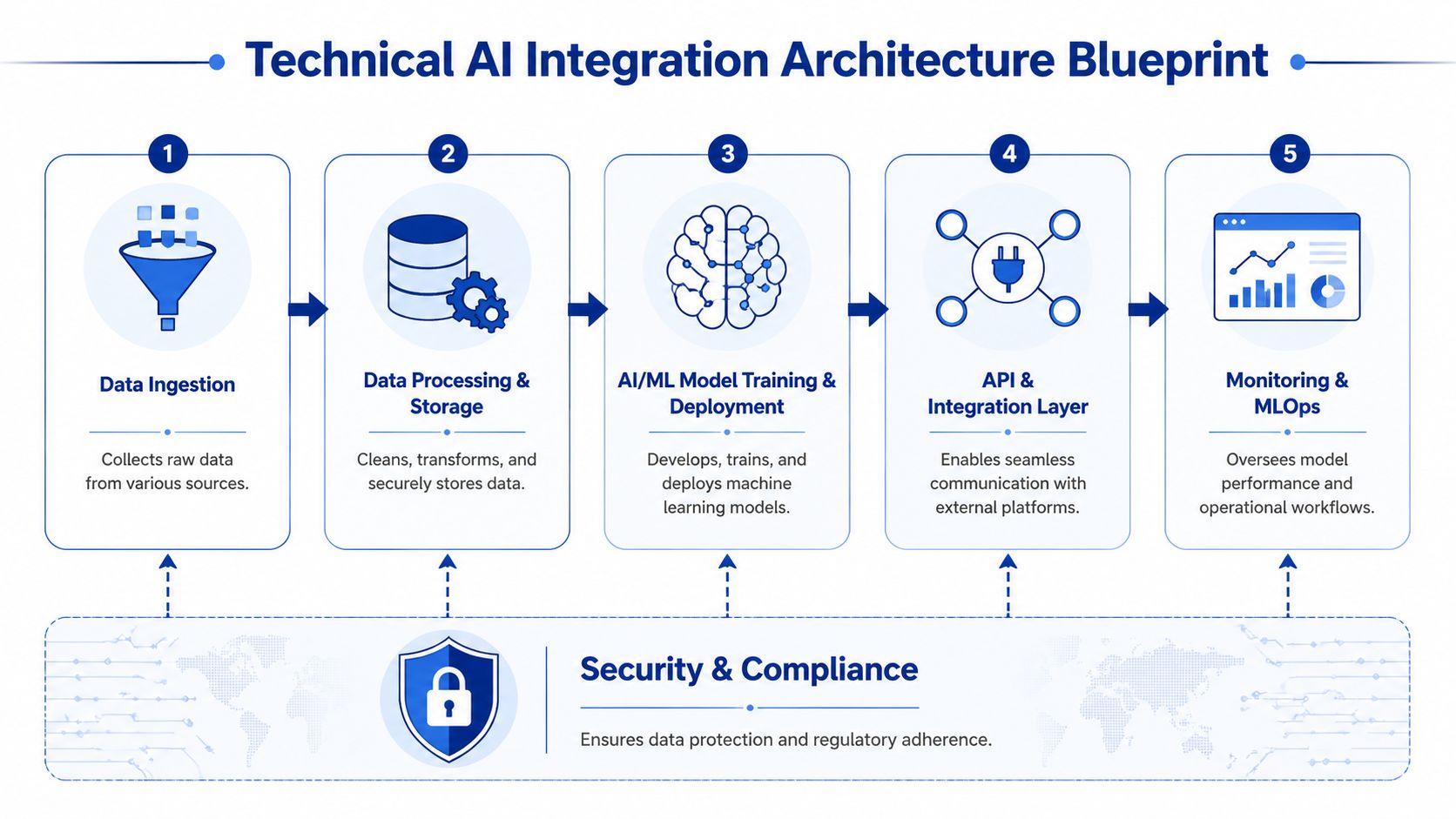

The most reliable reference architecture follows five building blocks. Data ingestion. Processing and storage. Model training and deployment. API and integration. Monitoring and MLOps. Security and compliance sit across all of them. If any one block is weak, the platform becomes difficult to scale or govern.

Start With the Interfaces Your Teams Already Know

Canadian healthcare teams don’t need exotic patterns first. They need stable standards. ML-based Clinical Decision Support Systems integrated through SMART on FHIR and CDS Hooks provide real-time patient data access for risk prediction, and FHIR-based apps reduce complexity for startups and clinics. According to Techno-soft’s review of AI-integrated healthcare systems, that strategy led 22% of Canadian healthcare organisations to implement domain-specific AI tools in 2025.

That matters because FHIR gives you a cleaner way to access patient, encounter, observation, medication, and condition data than custom point-to-point interfaces in many scenarios. It won’t solve every legacy problem, but it gives architects a sane baseline.
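As a rough illustration of that baseline, the sketch below builds a standard FHIR search URL for a patient's observations and pulls numeric values out of the returned Bundle. The base URL, patient ID, and the LOINC code in the usage note are placeholders, and authentication (for example, a SMART on FHIR token) is deliberately left out.

```python
from urllib.parse import urlencode


def observation_search_url(base_url: str, patient_id: str, loinc_code: str) -> str:
    """Build a FHIR search URL for one patient's observations, newest first."""
    query = urlencode({
        "patient": patient_id,
        "code": f"http://loinc.org|{loinc_code}",  # system|code token search
        "_sort": "-date",
    })
    return f"{base_url.rstrip('/')}/Observation?{query}"


def quantity_values(bundle: dict) -> list:
    """Extract (timestamp, value, unit) tuples from a FHIR searchset Bundle."""
    values = []
    for entry in bundle.get("entry", []):
        resource = entry.get("resource", {})
        if resource.get("resourceType") != "Observation":
            continue
        quantity = resource.get("valueQuantity")
        if quantity is None:               # skip coded or text-only results
            continue
        values.append((
            resource.get("effectiveDateTime"),
            quantity.get("value"),
            quantity.get("unit"),
        ))
    return values
```

A call such as `observation_search_url("https://fhir.example.org/r4", "123", "2524-7")` uses a made-up server address; the same two functions work unchanged against any server that returns standard Observation resources, which is the practical benefit over point-to-point interfaces.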

Use the architecture as a sequence of decisions:

Ingestion Layer

Connect EHR, lab, imaging, scheduling, billing, portal, and device sources. Some data arrives through HL7 v2 feeds. Some comes through FHIR APIs. Some still comes from flat files or scanned documents.

Processing and Storage Layer

Normalise identifiers, validate message quality, map data into common structures, and separate raw from curated stores. At this stage, many projects either create future flexibility or hard-code future pain.

Model Serving Layer

Host the model where security, latency, and governance make sense for the use case. Keep inference services separate from transaction systems when possible.

Integration Layer

Push outputs back into the EHR, task queue, clinician inbox, or operations dashboard through auditable APIs.

Monitoring Layer

Watch not only uptime, but drift, adoption, override rates, failed predictions, and workflow exceptions.
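For the monitoring layer, override and failure rates can be computed from a plain event log before any dedicated MLOps tooling is in place. The sketch below assumes a hypothetical `InferenceEvent` record with three outcome values; the names are illustrative, not a standard schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class InferenceEvent:
    """Hypothetical log entry written each time a prediction is shown to staff."""
    model_version: str
    outcome: str  # assumed values: "accepted", "overridden", "failed"


def summarise(events: list) -> dict:
    """Compute simple adoption metrics from logged inference events."""
    counts = Counter(event.outcome for event in events)
    total = len(events)
    reviewed = counts["accepted"] + counts["overridden"]
    return {
        "total": total,
        # share of requests where the model or an upstream feed failed
        "failure_rate": counts["failed"] / total if total else 0.0,
        # share of delivered predictions that staff overrode
        "override_rate": counts["overridden"] / reviewed if reviewed else 0.0,
    }
```

A rising override rate for one model version is often an earlier warning sign than an accuracy metric, because it reflects what clinicians actually do with the output.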

What Works in Real Deployments

A useful mental model is to think of the AI layer as an additional clinical service, not a magical brain sitting above the stack. It needs input contracts, version control, operational support, and rollback paths.

Teams that want a broader engineering view often benefit from reviewing AI software architecture best practices alongside established enterprise application architecture patterns. The combination is useful because health systems rarely have the luxury of rebuilding around a single clean architecture.

Architecture test: If you can’t swap one model for another without rewriting your core workflows, you haven’t built an AI integration layer. You’ve hard-wired a dependency.
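The architecture test above can be made concrete with a thin interface between the workflow and the model. In this sketch, `RiskModel`, both model classes, and the feature name are hypothetical, and the numeric cut-offs are placeholders rather than clinical guidance.

```python
from typing import Protocol


class RiskModel(Protocol):
    """Contract the workflow depends on; any model meeting it can be swapped in."""
    version: str

    def predict(self, features: dict) -> float: ...


class RuleBasedModel:
    version = "rules-v1"

    def predict(self, features: dict) -> float:
        # Placeholder rule for illustration only, not a clinical threshold.
        return 0.8 if features.get("lactate_mmol_l", 0.0) >= 2.0 else 0.1


class VendorModelStub:
    version = "vendor-stub-v2"

    def predict(self, features: dict) -> float:
        # Stand-in for a call to an external inference service.
        return min(1.0, features.get("lactate_mmol_l", 0.0) / 4.0)


def flag_for_review(model: RiskModel, features: dict, threshold: float = 0.5) -> dict:
    """Workflow code: knows the contract, not the model behind it."""
    score = model.predict(features)
    return {"flag": score >= threshold, "score": score, "model_version": model.version}
```

Because `flag_for_review` only depends on `predict` and `version`, replacing `RuleBasedModel` with `VendorModelStub` changes one argument, not the workflow, and the model version still lands in the audit record.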

Avoid These Design Mistakes Early

Some patterns fail repeatedly in hospital environments:

Model-first procurement: A vendor demo shows strong outputs, but no one has validated source system access, event timing, or write-back methods.

Shadow data pipelines: Innovation teams create parallel extracts outside core governance, which later become compliance and support problems.

Single dashboard syndrome: AI recommendations live in a separate portal that staff stop checking once operational pressure rises.

No fallback workflow: When the model or upstream feed fails, the service line has no graceful manual path.
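The last failure mode is the easiest to guard against in code. A minimal sketch, assuming a hypothetical `score_fn` that wraps the model call and a plain list standing in for the manual work queue:

```python
def triage_with_fallback(referral: dict, score_fn, manual_queue: list) -> dict:
    """Try the model; if it fails for any reason, hand the item to staff."""
    try:
        score = score_fn(referral)
    except Exception as exc:
        # Broad catch is deliberate in this sketch: any model or feed failure
        # should degrade to the manual path rather than block the service line.
        manual_queue.append(referral)
        return {"route": "manual", "reason": type(exc).__name__}
    return {"route": "ai_assisted", "score": score}
```

Recording the failure reason alongside the route lets operations teams see how often the manual path is being used and why.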

One practical option for hospitals that need custom integration around existing systems is Cleffex Digital Ltd, which builds regulated healthcare software and AI-enabled workflow integrations in North American environments. That kind of partner model usually makes sense when off-the-shelf tools cover only part of the stack and the hospital still needs custom connectors, workflow orchestration, or compliance-aware application layers.

Navigating Data Governance and Canadian Compliance

Most AI projects in healthcare don’t fail because the model is weak. They fail because the data estate is inconsistent, poorly governed, or politically fragile. In Canada, that problem gets sharper because privacy obligations span federal and provincial layers, and operational leaders are rightly cautious about where patient data moves and who can inspect it.

Good governance is not a brake on AI integration in healthtech platforms. It’s what makes production use defensible.

Privacy by Design Is an Architecture Choice

The technical question isn’t just “is this vendor compliant?” The deeper question is whether your platform makes compliant behaviour the default. That means patient identity management, role-based access, data minimisation, audit trails, retention rules, and model input controls are built into the design rather than stapled on after procurement.

AI-driven integration platforms can help here. They achieve semantic consistency through automated data mapping and transformation, reducing manual configuration by up to 70%, while secure governance frameworks maintain compliance with PHIPA and PIPEDA. Benchmarks cited by BizData360’s healthcare data integration guide also show 99% accuracy in anomaly detection and de-duplication.

That combination matters more than it first appears. Better mapping reduces staff work. Better de-duplication reduces patient matching errors. Better auditability reduces organisational risk.

The Governance Controls That Actually Matter

A Canadian hospital AI programme should be explicit about a small set of controls before scale-up:

Data lineage: Track where each field came from, how it was transformed, and which model consumed it.

Purpose limitation: Keep a clear record of which data elements are used for documentation, triage, operations, or analytics.

Human review points: Define where clinicians or authorised staff confirm, reject, or amend AI-generated outputs.

Residency and sovereignty safeguards: Confirm where data is stored, processed, cached, and logged, including subcontractor exposure.

De-identification strategy: Separate identifiable, pseudonymised, and fully de-identified datasets by use case.
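To illustrate the pseudonymised tier in the de-identification control above, the sketch below replaces a patient identifier with a keyed hash and drops direct identifiers. The field names and the `drop_fields` default are assumptions, and whether a given output counts as de-identified under PHIPA or PIPEDA is a decision for the privacy office, not something this code settles.

```python
import hashlib
import hmac


def pseudonymise(patient_id: str, secret_key: bytes) -> str:
    """Derive a stable pseudonym with HMAC-SHA256; the key must stay protected."""
    digest = hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


def to_pseudonymised(record: dict, secret_key: bytes,
                     drop_fields=("name", "health_card_number", "address")) -> dict:
    """Return a copy of the record without direct identifiers.

    Field names are hypothetical; remaining fields may still be identifying
    in combination, so this is pseudonymisation, not full de-identification.
    """
    cleaned = {
        key: value for key, value in record.items()
        if key not in drop_fields and key != "patient_id"
    }
    cleaned["patient_pseudonym"] = pseudonymise(record["patient_id"], secret_key)
    return cleaned
```

A keyed hash keeps the same patient linkable across datasets for analytics, while anyone without the key cannot simply hash known identifiers to reverse the mapping.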

For teams sorting through the Canadian regulatory overlap, it can help to explore SOC2Auditors' Canadian compliance advice and compare that guidance with healthcare-specific implementation concerns, such as those covered in Cleffex’s article on AI in healthcare data privacy in Canada.

Strong governance shortens procurement cycles because legal, privacy, and clinical leadership can see how the system behaves before it touches production data.

What Doesn’t Work

Three governance shortcuts tend to create avoidable trouble.

First, hospitals sometimes assume a cloud provider’s baseline security posture answers all healthcare compliance questions. It doesn’t. Your configuration, workflows, data flows, and user access patterns still determine most of the actual risk.

Second, teams often over-centralise review. Every model update, mapping change, or prompt adjustment gets trapped in a slow committee process. Governance should be controlled, but it also needs operating procedures that allow safe iteration.

Third, some organisations try to govern AI outputs without governing source data quality. That never holds. If duplicate patients, stale observations, or inconsistent coding enter the pipeline, the compliance conversation quickly turns into a patient safety conversation.

The Critical Decision: Build Versus Buy

Every hospital reaches the same fork. Should you build AI capability in-house, buy a vendor platform, or combine both? The wrong answer usually comes from asking the wrong question. This isn’t really a technology preference exercise. It’s a decision about control, speed, internal capability, workflow uniqueness, and long-term support burden.

A buy-first instinct often makes sense for mature use cases like ambient documentation, coding assistance, or imaging support, where vendors already understand the workflow and regulatory requirements. A build-first strategy makes more sense when your differentiation lies in orchestration across local systems, custom triage logic, or a unique patient service model that packaged tools won’t fit cleanly.

Build vs. Buy Decision Matrix for Healthtech AI

| Factor | Build (In-House) | Buy (Vendor Solution) |
|---|---|---|
| Speed to deployment | Slower at the start because architecture, interfaces, and governance processes must be assembled internally | Faster for common use cases if the vendor already supports healthcare workflows |
| Workflow customisation | Strong fit for hospital-specific intake, referral, or escalation logic | Often limited to the vendor’s operating assumptions and roadmap |
| Control over data handling | Higher control if your team can govern hosting, access, logs, and model behaviour properly | Depends on contract terms, hosting model, subcontractors, and product constraints |
| Internal skill requirement | Requires product ownership, solution architecture, engineering, privacy input, and support capability | Reduces engineering load but still needs integration, security review, and vendor management |
| Interoperability flexibility | Better when legacy systems, bespoke APIs, and local workflow rules dominate | Better only if the vendor has proven connectors for your stack |
| Long-term maintenance | Ongoing model, infrastructure, and support ownership stays with the hospital or its build partner | Ongoing dependence on vendor pricing, roadmap, support quality, and product changes |
| Procurement risk | Delivery risk, if the scope is unclear or internal governance is weak | Lock-in risk if contract terms and extraction rights aren’t negotiated well |
| Best fit | Strategic capabilities that need tailoring and close alignment with local systems | Repeatable capabilities that are already well understood in the market |

A Practical Scorecard for CIOs

Use four questions to pressure-test the decision.

Is the Use Case Common or Institution-Specific?

If many Canadian providers solve the same problem in broadly similar ways, buying is usually more sensible. Ambient documentation is one example. If the use case depends on your hospital’s own referral rules, regional service network, or unusual system mix, building or heavily customising often wins.

Where Does Risk Sit?

Buying lowers product development risk but may increase contract and dependency risk. Building gives you more control but increases delivery and operating risk. Be honest about which kind of risk your organisation is equipped to manage.

Decision cue: Buy capabilities. Build differentiation.

Can the Vendor Meet Your Integration Reality?

Many products claim FHIR support. Far fewer handle mixed estates well. A mid-sized hospital often runs a combination of legacy interfaces, custom exports, document workflows, and fragmented identity matching. If the vendor’s integration assumptions don’t fit your environment, the “fast” option may become the slower one.

Who Owns the Operating Model After Go-Live?

This question gets skipped too often. Someone still has to handle incident response, model oversight, clinician feedback, release management, and audit support. If no one owns that after launch, both build and buy strategies fail.

The Hybrid Model Is Often the Most Practical

In Canadian health systems, the best answer is often hybrid. Buy the specialist capability. Build the integration and governance layer around it. That approach preserves speed where the market is mature and control where local constraints matter most.

It also aligns with how hospitals evolve. Few have the appetite to replace major systems outright. Most need to improve them, connect them, and wrap them with safer intelligence.

A Practical Roadmap for Phased AI Implementation

Canadian hospitals do not struggle with AI because the use cases are unclear. They struggle because many pilots never make it through privacy review, integration work, procurement, and operational handoff. A phased plan addresses that reality better than a broad innovation program.

For a mid-sized hospital, the first step is to choose a use case with three traits. The data is already available in systems you control. The clinical risk is low enough to support supervised rollout. The operational gain is visible within one budget cycle. Documentation support, referral triage, scheduling optimisation, and document classification usually meet that test better than diagnosis support or autonomous decision tools.

Set the operating boundary before any model evaluation starts:

What the AI can do

What requires human review

Which systems are approved as source data

Where the output appears in the workflow

Which metrics determine whether the pilot continues
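Writing the boundary down as data, rather than leaving it in a slide deck, makes it checkable. The sketch below is one hypothetical way to do that; `PilotBoundary`, its fields, and the metric names in the usage note are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotBoundary:
    """Hypothetical machine-readable version of the pilot's operating boundary."""
    allowed_actions: frozenset        # what the AI can do
    human_review_required: frozenset  # actions that need sign-off
    approved_sources: frozenset       # systems approved as source data
    output_location: str              # where the output appears in the workflow
    continuation_metrics: dict        # metric name -> minimum acceptable value

    def permits(self, action: str, source: str) -> bool:
        """An action is in scope only if both it and its data source are approved."""
        return action in self.allowed_actions and source in self.approved_sources

    def needs_review(self, action: str) -> bool:
        return action in self.human_review_required

    def should_continue(self, observed: dict) -> bool:
        """The pilot continues only if every agreed metric meets its minimum."""
        return all(
            observed.get(name, float("-inf")) >= minimum
            for name, minimum in self.continuation_metrics.items()
        )
```

For example, a referral triage pilot might allow `"suggest_priority"` from an `"ehr"` source, require review for that action, and set a minimum such as `{"clinician_acceptance_rate": 0.7}`; a missing metric counts as a failure, which keeps the continuation decision honest.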

That discipline matters in the Canadian context. If personal health information is involved, privacy and security teams need to assess PHIPA or PIPEDA obligations early, along with data residency, vendor subprocessors, and whether any inference or logging data leaves Canada. Those questions are easier to answer when the scope is tight.

The second phase is workflow proof, not model theatre. Accuracy scores matter, but they are rarely the reason a hospital keeps or kills an AI tool. The practical questions are different. Does the output arrive at the right point in the clinician's day? Can staff correct it quickly? Can managers review exceptions without creating another manual queue? If a tool saves time in a test environment but adds friction in charting, referral handling, or audit prep, adoption drops fast.

I usually advise hospitals to assign one accountable process owner at this stage. Not a general sponsor. Not a project observer. A named leader who owns the workflow after the implementation team steps back.

Production hardening comes next. At this stage, many promising pilots stall. The model may work, but the hospital still lacks support procedures, access controls, log retention rules, rollback steps, release approvals, and a clear record of who can change prompts, thresholds, or business rules. If the system writes into an EHR, LIS, RIS, or scheduling platform, the authoring model and audit trail need to be explicit. If an external model provider is involved, legal and privacy teams need a documented data flow, retention terms, and breach obligations that fit Canadian requirements.

Training also needs to cover failure modes. Staff should know when to trust the output, when to override it, and how to report bad results. That is what turns a pilot into an operating service.

The final phase is selective scale. Reuse the same integration pattern, governance checklist, and approval path for adjacent workflows instead of launching a dozen unrelated pilots. Hospitals get better results by repeating one proven method across multiple departments than by chasing every new AI feature at once.

That is how AI integration in healthtech platforms becomes sustainable. It becomes a managed hospital capability, grounded in local compliance, data sovereignty, and workflows that clinical and operational teams will keep using.

If your hospital is weighing where to start, Cleffex Digital Ltd can help scope a compliant AI integration path around your existing systems, whether that means a focused pilot, a custom interoperability layer, or a broader platform modernisation plan built for Canadian healthcare requirements.