The most popular advice on healthtech MVP development is still wrong for Canada. It says to move fast, keep scope lean, and sort compliance once users prove interest. That approach might work for a generic SaaS tool. In Canadian healthcare, it creates expensive rework, weak adoption, and avoidable pilot failures.

A usable MVP in this market starts with two realities. First, provincial compliance rules shape the product from day one, not after launch. Second, clinicians won't adopt software just because it looks modern. They adopt it when it fits the way care is delivered in a clinic, hospital unit, or insurer workflow.

I've seen teams waste months building features they thought were essential, only to learn the actual barrier was a consent flow, an integration assumption, or a nurse workflow they never validated. The teams that move cleanly through the MVP stage do less. They define one clinical problem, map the province-specific rules around that problem, and build only what they can test safely in a real setting.

Defining Your Clinical Problem and MVP Scope

Healthtech MVPs typically fail because they target a hypothetical pain point instead of a recurring clinical bottleneck.

In Canada, scope definition starts earlier than many founders expect. Before a team writes user stories, it needs to know which workflow it is improving, who handles personal health information in that workflow, and which province sets the operating rules. A scheduling tool for an Ontario clinic, a remote monitoring pilot in Alberta, and an insurer-facing care coordination product in BC can all look similar in Figma and still require different decisions on consent, access, auditability, and data handling. Teams that treat scope and compliance as separate workstreams usually pay for that split twice.

Start With a Workflow, Not a Feature List

A useful scoping question is simple. Where do care delivery or clinic operations break down today?

The answer is rarely “we need AI” or “we need a patient app.” It is usually narrower and more expensive than that. Referral intake sits in a fax queue for days. Front-desk staff enter the same demographics into two systems. Nurses spend time chasing unsigned forms before visits. Specialists open incomplete charts and start appointments late.

Pick one workflow you can improve from start to finish. Good first-scope examples include appointment booking attached to an eReferral path, digital intake that feeds an existing admin process, or medication reconciliation with read-only access to a source system. Each is narrow enough to pilot and broad enough to show operational value.

This is also the point where compliance changes scope. If the target workflow involves substitute decision-makers, minors, cross-site access, or information sharing between custodians, your MVP may need consent logic and role controls earlier than a founder expects. That is product scope, not legal cleanup. Teams planning regulated products should account for that from the outset, especially if they are budgeting around healthcare compliance software development requirements in Canada.
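To show what "consent logic as product scope" can look like, here is a minimal sketch that treats consent as a record with a purpose, a grantor, and a lifetime rather than a single checkbox. The `ConsentRecord` fields, the purpose strings, and the `may_disclose` rule are all hypothetical illustrations, not a statement of what any provincial statute requires. The real rules come out of the privacy review.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class ConsentRecord:
    """Hypothetical consent record; field names are illustrative only."""
    patient_id: str
    purpose: str                 # e.g. "referral_intake"
    granted_by: str              # "patient" or "substitute_decision_maker"
    granted_on: date
    withdrawn_on: Optional[date] = None


def may_disclose(consent: ConsentRecord, purpose: str, on: date) -> bool:
    """Allow disclosure only for the consented purpose while consent is active."""
    if consent.purpose != purpose:
        return False
    if on < consent.granted_on:
        return False
    if consent.withdrawn_on is not None and on >= consent.withdrawn_on:
        return False
    return True


consent = ConsentRecord(
    patient_id="demo-001",
    purpose="referral_intake",
    granted_by="substitute_decision_maker",
    granted_on=date(2024, 1, 10),
    withdrawn_on=date(2024, 3, 1),
)

print(may_disclose(consent, "referral_intake", date(2024, 2, 1)))  # True
print(may_disclose(consent, "marketing", date(2024, 2, 1)))        # False
print(may_disclose(consent, "referral_intake", date(2024, 3, 1)))  # False
```

Even this small model forces scope questions early: who can grant consent, for which purpose, and what happens on withdrawal.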

Run Interviews That Surface Operational Truth

Generic discovery calls do not give enough signal in healthcare. Interview the people doing the work, the person approving the budget, and the person who gets blamed when the workflow breaks.

Ask each participant to walk through the last real case, step by step. Look for handoffs, duplicate entries, delays caused by missing information, and the manual fixes staff use to keep the day moving. Those workarounds often define the MVP better than any feature request.

I usually want evidence from clinicians, admin staff, and at least one operational owner before I trust a scope. If you are building for physician workflows, load matters. WeekdayDoc's burnout statistics are a useful reminder that physicians are already absorbing a heavy administrative burden. If your MVP adds clicks, inbox noise, or training overhead, adoption drops fast, even when the feature set looks strong in a demo.

Practical rule: If a clinician cannot explain the value in one sentence tied to one task, the MVP still has too much in it.

Cut Scope Until the Value Is Obvious

Founders often keep edge-case features because they worry the product will look incomplete. In clinical software, incomplete is acceptable. Unclear is not.

Use a hard filter before anything enters sprint planning:

Single-workflow test: If the feature does not improve the target workflow directly, cut it.

Pilot safety requirement: Keep only the minimum permissions, audit trail, and admin controls needed to test the product responsibly.

Clinical adoption test: If staff need significant retraining to use the feature, push it to a later phase unless it removes a major point of friction.

Integration realism: If an integration is helpful but not necessary for the first pilot, use a manual or read-only bridge first.
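The four filters above can be sketched as a small decision function. This is one possible encoding, not a fixed rule set: the `Feature` fields, the order of the checks, and the example features are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    """Hypothetical backlog item described by the four filter questions."""
    name: str
    improves_target_workflow: bool
    needed_for_safe_pilot: bool          # permissions, audit trail, admin controls
    needs_significant_retraining: bool
    removes_major_friction: bool
    is_optional_integration: bool = False


def scope_decision(f: Feature) -> str:
    """Return 'keep', 'cut', 'later', or 'bridge' for a candidate MVP feature."""
    if f.needed_for_safe_pilot:
        return "keep"                    # pilot safety requirement
    if not f.improves_target_workflow:
        return "cut"                     # single-workflow test
    if f.is_optional_integration:
        return "bridge"                  # manual or read-only bridge first
    if f.needs_significant_retraining and not f.removes_major_friction:
        return "later"                   # clinical adoption test
    return "keep"


backlog = [
    Feature("audit log", False, True, False, False),
    Feature("patient wellness feed", False, False, False, False),
    Feature("EMR write-back", True, False, False, False, is_optional_integration=True),
    Feature("new triage dashboard", True, False, True, False),
    Feature("digital intake form", True, False, False, True),
]

for f in backlog:
    print(f"{f.name}: {scope_decision(f)}")
```

The point of writing the filter down, in code or on a whiteboard, is that every feature gets the same questions in the same order.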

A strong MVP can look modest on paper. That is usually a good sign. In Canadian healthtech, the better early products are often the ones that fit one clinic process, satisfy the relevant provincial constraints, and give a care team a reason to keep using them after the pilot ends.

Navigating the Canadian Compliance Maze

Founders often treat compliance as a review step after the MVP is built. In Canadian healthtech, that mistake changes the product itself.

A team can scope a clean pilot, build quickly, and still get stuck because the app assumes the wrong custodian model, stores data in the wrong jurisdiction, or handles consent like a consumer wellness tool instead of a clinical system. By the time those issues surface, the fix is rarely a policy update. It is usually product rework.

The first hard call is simple. Which province are you building the pilot for?

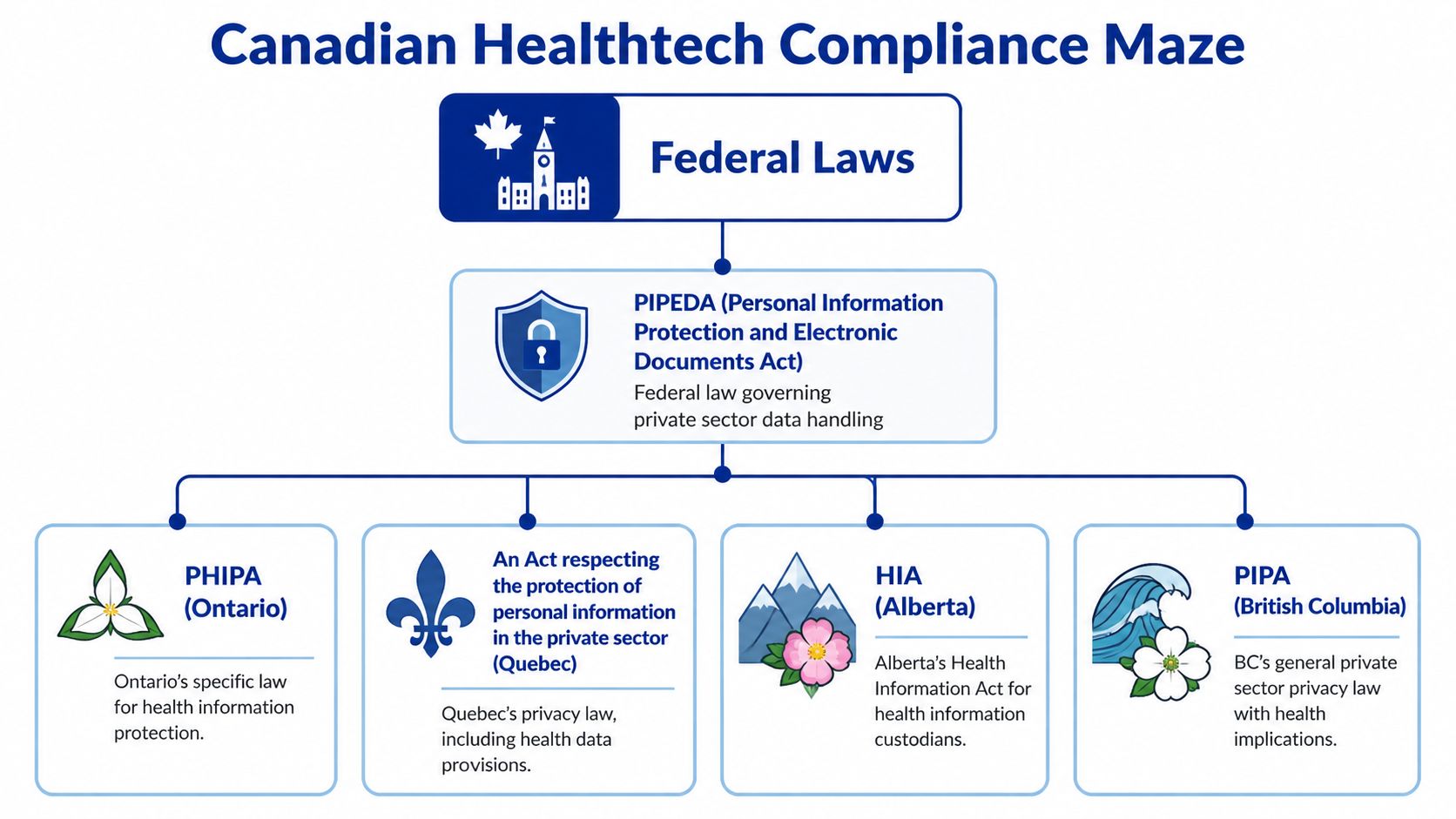

PIPEDA may still matter, especially for commercial activity, but health data rules are often driven by provincial statutes and by how care delivery is organised locally. Ontario brings PHIPA into the centre of the conversation. Alberta teams need to account for HIA. In British Columbia, PIPA often catches companies that assume they are outside the stricter part of health privacy because they are not a hospital or EMR vendor. Quebec raises the bar again with tighter expectations around privacy governance, language, and documentation.

Those differences shape product scope early. A messaging feature may look small in a roadmap review, but if it creates part of the clinical record, triggers retention duties, or changes who can disclose information to whom, it stops being a lightweight add-on.

I push teams to answer four questions before design gets too far ahead:

Who is acting as the health information custodian, trustee, or comparable accountable party in practice

Where data is stored, processed, and backed up

What consent and disclosure model fits the clinical workflow

How access is limited, approved, and logged

If a team cannot answer those clearly, the backlog is ahead of the strategy.
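One lightweight way to keep those four questions visible is to track them as a structured record next to the backlog. The sketch below is an assumption about how a team might do that; the class name, field names, and example answers are hypothetical.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class PilotComplianceProfile:
    """One field per question; None means 'not answered yet'."""
    province: str
    accountable_party: Optional[str] = None   # custodian, trustee, or equivalent
    data_locations: Optional[str] = None      # storage, processing, backups
    consent_model: Optional[str] = None       # consent and disclosure approach
    access_controls: Optional[str] = None     # how access is limited, approved, logged


def unanswered(profile: PilotComplianceProfile) -> List[str]:
    """List the questions that still have no documented answer."""
    return [f.name for f in fields(profile) if getattr(profile, f.name) is None]


profile = PilotComplianceProfile(
    province="ON",
    accountable_party="Pilot clinic (assumed, to be confirmed in privacy review)",
    data_locations="Azure Canada Central",
)

print(unanswered(profile))  # ['consent_model', 'access_controls']
```

If the list is not empty when design work starts, that is the signal that the backlog is running ahead of the strategy.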

Start the Privacy Review in Discovery

A privacy impact assessment should influence feature decisions, not just procurement paperwork.

Done early, it forces useful decisions while they are still cheap: which data fields the pilot needs, which user roles require access on day one, whether a vendor introduces cross-border processing risk, and whether a clinician-facing note, alert, or attachment becomes part of the formal record. Those are product questions with legal consequences.

For teams translating policy requirements into engineering work, this guide to healthcare compliance software development is a practical reference.

Common Mistakes That Create Expensive Rework

The pattern is familiar across both startup and enterprise pilots.

Federal-only scoping: Teams map requirements to PIPEDA and miss the provincial rules that drive actual approval.

Consumer-style consent flows: Clinical use cases often need more precise handling of purpose, disclosure, substitute decision-makers, and staff access.

Loose data residency assumptions: A hosting choice that looks harmless in sprint planning can stall security review or procurement.

Late audit logging: If the system cannot show who accessed what and when, remediation usually touches architecture, not just reporting.

No clinical owner on compliance decisions: Legal and technical teams can interpret obligations differently unless a care delivery stakeholder defines how the workflow really works.

The practical path is narrower than many founders expect. Pick the pilot province first. Define the clinical setting second. Then build the MVP around the privacy, access, and governance model that fits that environment. Teams that do this well usually ship fewer features, but they reach live use with less rework and far better odds of clinical adoption.

Architecting for Security and Interoperability

Teams often treat architecture as a technical layer they can clean up after product-market fit. In Canadian healthtech, that approach creates delays fast. The first architecture choices shape privacy review, integration effort, procurement risk, and whether clinicians can use the product inside a real workflow.

For Canadian healthtech MVPs, our practice usually starts with a simple baseline: React Native or Flutter for the app layer, Node.js or Go for backend services, a relational database with encryption and audit support, and FHIR R4 as the default interoperability model. Hosting in Azure Canada Central is often the practical choice when clients need Canadian data residency and want a vendor security posture that procurement teams already recognise. None of these choices is fashionable. They reduce approval friction.

Choose Boring Infrastructure Where Review Risk Is Highest

Early architecture should optimise for three things: traceability, controllable access, and integration tolerance.

That usually means avoiding clever infrastructure patterns that save a few weeks now but create long security questionnaires later. A single codebase on mobile can keep costs contained. A backend with clear service boundaries makes it easier to isolate PHI handling, apply logging consistently, and limit blast radius if requirements change. For startup teams, this is often the difference between a pilot that gets approved and one that gets stuck in review.

I have seen teams lose months because they picked tools their engineers liked but their hospital buyer could not assess quickly. In a consumer app, that might be survivable. In a Canadian clinical setting, it affects contract timing and pilot scope.

Interoperability Has To Match the Pilot Environment

A polished standalone app proves very little if the pilot depends on an EMR, scheduling system, lab feed, or referral workflow. Clinical staff will judge the MVP by the extra steps it creates or removes. If staff must re-enter data into Accuro, OSCAR, TELUS PS Suite, or a hospital system, adoption drops fast.

The safer pattern is to design for constrained integration from day one. Start with the smallest connection that supports the clinical use case. Read-only demographics, appointments, or selected chart data are often enough for an MVP. That gives the team a way to validate workflow value without taking on full write-back complexity, reconciliation logic, or the governance overhead that comes with changing the source of record.
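As an illustration of a read-only connection, the sketch below pulls a handful of demographic fields out of a FHIR R4 `Patient` resource that has already been fetched. The element names (`name`, `family`, `given`, `birthDate`, `gender`) are standard FHIR Patient elements. The function name, the output shape, and the sample record are assumptions. Fetching the resource is left out because endpoints and authentication are vendor-specific.

```python
from typing import Any, Dict


def extract_demographics(patient: Dict[str, Any]) -> Dict[str, Any]:
    """Map a FHIR R4 Patient resource to a flat, read-only demographics view."""
    if patient.get("resourceType") != "Patient":
        raise ValueError("expected a FHIR Patient resource")
    names = patient.get("name") or [{}]
    # Prefer the name marked "official" if present, otherwise take the first one.
    name = next((n for n in names if n.get("use") == "official"), names[0])
    return {
        "id": patient.get("id"),
        "family_name": name.get("family"),
        "given_names": " ".join(name.get("given", [])),
        "birth_date": patient.get("birthDate"),
        "gender": patient.get("gender"),
    }


# Hypothetical sample resource, not real patient data.
sample = {
    "resourceType": "Patient",
    "id": "example-123",
    "name": [
        {"use": "nickname", "given": ["Sam"]},
        {"use": "official", "family": "Tremblay", "given": ["Samuel", "J."]},
    ],
    "birthDate": "1980-04-02",
    "gender": "male",
}

print(extract_demographics(sample))
```

Nothing here writes back to the source system, so the EMR stays the source of record while the pilot validates workflow value.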

FHIR should be the default target, but teams still need to plan for HL7 v2 realities, custom exports, and vendor-specific APIs. A practical overview of how FHIR integration transforms healthcare is useful here, especially for teams deciding what to normalise now versus what to map later.

The same caution applies to AI features. If the MVP includes any decision-support layer, the architecture has to preserve traceability, source data quality, and clinician review paths. Work like evaluating AI for ECG interpretation is a good reminder that model output does not remove the need for reliable system design around it.

Build the Controls You Will Be Asked To Prove

Security controls belong in version one because the pilot environment will test them early.

For a Canadian clinical MVP, that usually includes:

Encryption at rest and in transit: Use current, standard encryption methods across storage, backups, and data transfer.

Role-based access control: Map permissions to actual clinical and operational roles, not generic user types.

Audit logging: Record access to PHI, privilege changes, exports, and clinically meaningful actions from the start.

Environment separation: Keep development, staging, and production isolated, with strict rules around test data.

PHI boundary control: Isolate services that handle personal health information so later features do not spread risk across the stack.
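Two of these controls, role-based access and audit logging, are easiest to get right when they share one code path. The following is a minimal in-memory sketch of that idea. The role names, permission strings, and log fields are hypothetical, and a real system would persist the log in tamper-resistant storage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Set

# Hypothetical role-to-permission map; real roles come from the pilot site.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "nurse": {"chart:read", "intake:write"},
    "front_desk": {"demographics:read", "demographics:write"},
    "clinic_admin": {"audit:read"},
}


@dataclass
class AuditLog:
    entries: List[dict] = field(default_factory=list)

    def record(self, user: str, role: str, action: str,
               patient_id: str, allowed: bool) -> None:
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "action": action,
            "patient_id": patient_id,
            "allowed": allowed,
        })


def access_phi(log: AuditLog, user: str, role: str,
               action: str, patient_id: str) -> bool:
    """Check permission and log the attempt, whether or not it is allowed."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    log.record(user, role, action, patient_id, allowed)
    return allowed


log = AuditLog()
print(access_phi(log, "u1", "nurse", "chart:read", "p-001"))       # True
print(access_phi(log, "u2", "front_desk", "chart:read", "p-001"))  # False
print(len(log.entries))                                            # 2
```

Logging denied attempts as well as granted ones is a deliberate choice here: it lets the log answer "who tried to access what and when", not only "who succeeded".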

The trade-off is straightforward. Adding these controls early increases initial delivery effort. Leaving them out usually costs more because remediation touches architecture, test plans, vendor review, and client trust all at once.

Good healthtech MVP architecture feels conservative because it is built for scrutiny. In Canada, that is not overengineering. It is what gives an MVP a realistic path from pilot to live use.

Prototyping and Validating With Clinical Users

Clinical UX falls apart in places a normal app team doesn't test. A nurse starts a task, gets interrupted, returns halfway through, notices a missing field, and needs to finish quickly without wondering whether the system saved anything. That's the environment your MVP has to survive.

A polished prototype can still fail because it assumes continuous attention. Clinical users rarely have that luxury. Their tools need to be obvious, forgiving, and fast under interruption.

Test the Prototype Where Work Actually Happens

The most useful usability sessions don't happen in a quiet boardroom. They happen in the context of real work, or as close to it as you can safely get.

Ask a clinician to complete one meaningful task in the prototype. Then watch where they hesitate. Watch what they skip. Watch whether they trust the labels, whether they understand what is editable, and whether they can recover after an interruption without redoing the whole flow.

At this stage, human-centred design stops being a slogan and becomes a delivery method. Practical guidance, like designing for people with a human-centred design process, is especially relevant in health products because the user is rarely sitting calmly with full attention.

Design for Cognitive Load, Not Aesthetics

Healthcare teams often ask for a “clean UI”. That matters, but clean isn't enough. The interface must reduce cognitive load in moments where users are juggling risk, urgency, and competing demands.

A few patterns consistently work better than flashy experiences:

Persistent context: Keep patient or case context visible so users don't lose their place.

Clear next action: One primary action per screen beats several equal-weight buttons.

Safe recovery: Autosave, confirmations only where needed, and obvious status feedback reduce anxiety.

Minimal free-text burden: Structured inputs often outperform open fields in busy settings.

A useful caution comes from an adjacent area, clinical AI evaluation. Articles like Qaly's piece on evaluating AI for ECG interpretation are worth reading because they show how quickly impressive-looking outputs can miss what matters in clinical use.

When a nurse asks, “What happens if I get pulled away here?”, they're not talking about UX preference. They're testing whether your product understands their reality.

Use Feedback To Cut, Not Just Add

After the first few usability sessions, teams often generate a long backlog of requested enhancements. That's the wrong instinct.

The best signal from testing is often what to remove, merge, or simplify. If three users misunderstand a step, don't add helper text and move on. Rework the flow. If a clinician ignores a dashboard widget, that's often a scope clue, not a training problem.

Launch Strategy, Metrics, and Iteration

A broad launch is usually a mistake for a Canadian healthtech MVP. The smarter move is a controlled pilot in one setting, with one workflow, under one privacy and operational model.

That sounds conservative. It is. In healthcare, restraint at launch usually produces better evidence, fewer compliance surprises, and stronger clinical references.

What To Measure in a Pilot

Early pilots fail when teams report interest instead of behaviour. Downloads, logins, and positive demo feedback can all look healthy while the product is still slowing staff down.

Measure the workflow you set out to improve. For example: how long the target task takes, whether users complete it without workaround steps, how often they return without reminders, and whether supervisors or clinic leads want the product kept in place after the pilot period. In Canadian deployments, I also want to see whether the product creates extra privacy steps for staff, whether audit logs are usable, and whether consent or role rules cause delays in real use.

A good pilot scorecard usually covers four areas:

Adoption: Active use by the intended role, repeat use, and drop-off after the first two weeks

Workflow performance: Time to complete the task, handoff delays, and error or rework rates

Trust: Override behaviour, escalation frequency, and qualitative feedback from clinicians and administrators

Compliance operations: Access issues, audit trail quality, privacy incident risk, and whether local policy assumptions held up in practice

The exact thresholds depend on the setting. A family health team, private clinic, and hospital department will tolerate different levels of friction. What matters is setting the threshold before launch, with the pilot site, so nobody redefines success after the fact.
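A scorecard with pre-agreed thresholds can be computed from very simple data. The sketch below covers only adoption and workflow performance. The event fields, the sample attempts, and the threshold values are all made up for illustration and would be replaced by whatever the pilot site agrees to before launch.

```python
from statistics import median
from typing import Dict, List

# Hypothetical pilot data: one row per attempt at the target task.
attempts: List[dict] = [
    {"user": "n1", "seconds": 95, "completed": True, "used_workaround": False},
    {"user": "n1", "seconds": 80, "completed": True, "used_workaround": False},
    {"user": "n2", "seconds": 140, "completed": True, "used_workaround": True},
    {"user": "n3", "seconds": 200, "completed": False, "used_workaround": False},
]

# Hypothetical thresholds, fixed with the pilot site before launch.
thresholds = {
    "max_median_seconds": 120,
    "min_clean_completion_rate": 0.6,
    "min_repeat_users": 1,
}


def scorecard(rows: List[dict]) -> Dict[str, float]:
    """Summarise task time, clean completion, and repeat use."""
    clean = [r for r in rows if r["completed"] and not r["used_workaround"]]
    per_user: Dict[str, int] = {}
    for r in rows:
        per_user[r["user"]] = per_user.get(r["user"], 0) + 1
    return {
        "median_seconds": median(r["seconds"] for r in rows if r["completed"]),
        "clean_completion_rate": len(clean) / len(rows),
        "repeat_users": sum(1 for n in per_user.values() if n > 1),
    }


def meets_thresholds(card: Dict[str, float], t: Dict[str, float]) -> Dict[str, bool]:
    return {
        "time": card["median_seconds"] <= t["max_median_seconds"],
        "completion": card["clean_completion_rate"] >= t["min_clean_completion_rate"],
        "repeat_use": card["repeat_users"] >= t["min_repeat_users"],
    }


card = scorecard(attempts)
print(card)
print(meets_thresholds(card, thresholds))
```

In this sample the task is fast enough and one user came back, but only half the attempts finished without a workaround, so the completion threshold fails. That is the kind of result logins and downloads would hide.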

Introduce AI Only When Governance Is Visible

AI can belong in an MVP. I have seen it work in intake routing, documentation support, claims review, and administrative triage. I have also seen solid products stall because the team treated model governance as a phase-two problem.

In Canada, that is an expensive assumption. Provincial privacy obligations still apply, and AI adds questions about traceability, human review, model drift, and procurement scrutiny. Even where the law is still evolving, buyers already ask practical questions: what data trained the model, what output can be challenged, who reviews edge cases, and how the system behaves when confidence is low.

If AI is in scope for launch, the pilot should make those controls visible from day one. Include output labelling, clear escalation paths, usage logging, version tracking, and an explicit statement of what the model does not decide. Clinical teams adopt AI faster when they can see its limits. Privacy and procurement teams approve it faster when they can see the controls.
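A minimal sketch of what visible controls can mean in code: every model output is wrapped with a label, a model version, and an escalation flag, and every call is logged. The confidence floor, the label wording, the model version string, and the field names are hypothetical. No real model is called here.

```python
from dataclasses import dataclass
from typing import List, Tuple

CONFIDENCE_FLOOR = 0.80  # hypothetical threshold agreed with the pilot site

# In-memory usage log for the sketch: (model_version, confidence, escalated).
usage_log: List[Tuple[str, float, bool]] = []


@dataclass(frozen=True)
class LabelledOutput:
    suggestion: str
    model_version: str
    confidence: float
    label: str        # shown to the user next to the suggestion
    escalate: bool    # True routes the case to a human reviewer


def label_output(suggestion: str, model_version: str, confidence: float) -> LabelledOutput:
    """Wrap a raw model suggestion with labelling, versioning, and escalation."""
    out = LabelledOutput(
        suggestion=suggestion,
        model_version=model_version,
        confidence=confidence,
        label="AI-generated suggestion, not a clinical decision",
        escalate=confidence < CONFIDENCE_FLOOR,
    )
    usage_log.append((model_version, confidence, out.escalate))
    return out


low = label_output("Route to urgent intake queue", "triage-model-0.3.1", 0.62)
high = label_output("Route to routine intake queue", "triage-model-0.3.1", 0.93)

print(low.escalate, high.escalate)  # True False
print(len(usage_log))               # 2
```

The wrapper is deliberately dull. Its job is to make sure no suggestion reaches a clinician without a label, a version, and a defined path for low-confidence cases.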

A strong pilot shows more than usage. It shows that the product can expand without creating new clinical risk or provincial compliance problems.

Iterate With an Evidence Chain

Post-launch iteration should answer a small set of hard questions, not chase every request that comes in.

Did the product improve the target workflow enough to justify broader rollout?

If adoption slowed, was the blocker usability, local process fit, training, or trust?

Did the release add privacy, security, or operational work for the site?

That evidence chain matters in Canada because scale usually depends on more than user enthusiasm. Privacy officers, clinical sponsors, procurement, and IT all need a reason to keep saying yes. Teams that document what changed, what improved, what failed, and what was fixed have a much easier time expanding from one pilot site to a second. Teams that launch widely, measure loosely, and promise future governance usually get stuck in pilot limbo.

Your Healthtech MVP Rollout and Cost Checklist

The cheapest healthtech MVP on paper often becomes the most expensive one in practice.

I see this pattern in Canadian projects all the time. A team budgets for product design and engineering, then runs into the work that ultimately decides whether a pilot can launch: privacy review, data flow decisions, security controls, clinical onboarding, procurement questions, and support during the first weeks of use. In Canada, those are core delivery costs, not overhead. Provincial privacy statutes such as PHIPA, data handling expectations, and site-specific clinical processes shape rollout from the start.

Use this checklist before vendor selection and before sprint planning. It helps expose whether the proposed MVP is really launchable in a clinic, not just demo-ready.

Healthtech MVP Timeline and Budget Checklist

| Phase | Key Activities | Estimated Timeline | Key Budget Items |
|---|---|---|---|
| Discovery | Interview clinicians, admin staff, and patients. Map one workflow. Define success metrics and province-specific compliance assumptions. | 2 to 4 weeks based on typical project timelines we've observed | Product discovery, workflow research, privacy consultation, stakeholder workshops |
| Compliance planning | Confirm applicable provincial law, data flows, consent model, user roles, audit requirements, and cloud residency approach. | Runs in parallel with discovery | Legal review, privacy impact assessment work, policy drafting, and records mapping |
| Design and technical specification | Create clickable prototypes, define data model, choose stack, plan interoperability, and document access controls. | Usually overlaps with early discovery and compliance work | UX design, architecture planning, prototype tooling, solution design |
| MVP development | Build the smallest secure product with core workflow support, authentication, logging, and required integrations. Keep scope tight and centred on the main workflow. | 8 to 12 weeks for many first-release builds we've delivered or reviewed | Engineering, QA, secure cloud setup, API work, and project management |
| Beta pilot | Deploy to a small clinic or contained department, train users, monitor completion, and collect structured feedback. | Often 3 to 6 weeks, depending on site readiness and support needs | Pilot support, onboarding materials, analytics tooling, issue triage, security monitoring |
| Iteration and go-forward decision | Fix UX friction, refine workflows, plan scale-up, or stop if the evidence is weak. | Immediate post-pilot cycle | Additional development, usability retesting, stakeholder reporting, roadmap planning |

These timelines are planning ranges, not guarantees. A single integration issue, a privacy review delay, or a local procurement requirement can add weeks.
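For a rough end-to-end figure, the phases with explicit week ranges in the table can be added up. The sketch assumes those three phases run back to back and that compliance planning and design overlap with discovery, as the table suggests. The `delay_weeks` parameter is a hypothetical buffer for the kinds of delay mentioned above.

```python
from typing import Dict, Tuple

# Phases with explicit week ranges in the table: (minimum, maximum).
phase_weeks: Dict[str, Tuple[int, int]] = {
    "Discovery": (2, 4),
    "MVP development": (8, 12),
    "Beta pilot": (3, 6),
}


def planning_range(phases: Dict[str, Tuple[int, int]],
                   delay_weeks: int = 0) -> Tuple[int, int]:
    """Sum sequential phase ranges; any delay is added to the upper bound only."""
    low = sum(lo for lo, _ in phases.values())
    high = sum(hi for _, hi in phases.values()) + delay_weeks
    return low, high


print(planning_range(phase_weeks))                 # (13, 22)
print(planning_range(phase_weeks, delay_weeks=3))  # (13, 25)
```

That puts a first pilot cycle at roughly 13 to 22 weeks before any post-pilot iteration, which is a more honest number to budget against than the development estimate alone.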

Budget Lines Founders Miss

Development quotes usually cover the visible build work. Pilot readiness depends on several other cost categories.

Privacy and legal review: Provincial interpretation matters. The answer can change based on whether your product serves a clinic, hospital, insurer, employer, or patient directly.

Security implementation: Role-based access, encryption, audit logs, session controls, and incident response preparation take real engineering time.

Interoperability testing: Interface work is rarely finished when the API connection is complete. Real sites expose field mismatches, workflow gaps, and local configuration issues.

Canadian cloud hosting and operations: Data residency expectations can affect vendor selection, infrastructure cost, and procurement approval.

Pilot operations: Training sessions, support coverage, issue triage, and user follow-up need named owners and a budget.

Clinical change management: A strong site lead often matters as much as the product itself. If nobody owns adoption inside the clinic, usage stalls fast.

One more line item deserves attention. Rework. Teams that skip early compliance and workflow validation often pay for the same feature twice.

A Practical Rollout Sequence

A rollout plan should reduce approval risk and learning risk at the same time.

Start with one province. A first pilot usually works better when the legal and operational assumptions are tied to one provincial context.

Choose one workflow that already hurts. Appointment intake, referral coordination, form completion, and discharge follow-up are common examples because the value can be observed quickly.

Pick one pilot environment with a real sponsor. A friendly executive is helpful, but an operational owner who can drive day-to-day use is better.

Keep integrations narrow. Read-only access or one controlled data exchange is often enough for an MVP if it supports the target workflow.

Set scale and stop criteria before launch. Define what adoption, completion, time saved, or error reduction must look like to justify another phase.

Write down key decisions. Privacy assumptions, access rules, data handling choices, and workflow exceptions should be documented while the team still remembers why they were made.

This sequence sounds conservative because it is. In Canadian healthtech, a narrow pilot with clear evidence usually gets farther than an ambitious release that creates extra review work for IT, privacy, and clinical leadership.

The Checklist That Matters at Go-Live

Before launch, confirm these points:

The product solves one clearly defined clinical or operational problem.

The province-specific privacy model has been reviewed.

Data storage, access, and retention rules are documented.

User roles and audit requirements are built into the product, not tracked manually.

The pilot site has named clinical and operational owners.

Training materials and support paths are ready.

Success metrics are agreed upon before the first user logs in.

The team knows what will trigger iteration, expansion, or shutdown.

That last point gets missed often. A pilot without decision criteria tends to drift, especially when feedback is mixed.

Final Read on Cost and Rollout

Healthtech MVP planning in Canada is not a race to release the most features for the lowest quote. It is a process of reducing clinical, privacy, and adoption risk early enough that the first pilot can survive real scrutiny.

That changes how smart teams spend money. They spend less on speculative functionality and more on workflow fit, traceability, controlled integrations, and the compliance decisions that keep expansion possible. That is usually the difference between a pilot that turns into procurement and one that dies as an interesting demo.

If you're planning a Canadian healthtech product and need a team that understands secure delivery, compliance-aware architecture, and practical MVP scoping, Cleffex Digital Ltd can help you move from idea to pilot with a disciplined build approach.