You've had the idea. You can explain the problem in one breath. Maybe a broker wants a simpler quoting workflow, a clinic needs a compliant patient intake tool, or a dealership wants better lead follow-up without another bloated system. Then the hard part hits. What do you build first, how much should you spend, and how do you avoid paying for the wrong product twice?

That's where MVP development for startups stops being startup jargon and becomes a practical decision tool. In Canada, that decision sits inside a very specific environment. You're balancing budget in CAD, privacy obligations under PIPEDA, possible sector rules in insurance or healthcare, and pressure to prove traction before the market loses interest.

Most founders don't need more theory. They need a build path that cuts risk without cutting the parts that matter.

Your Blueprint for MVP Development in Canada

A founder in Calgary has a clear pain point, two prospective customers, and a product vision that already spans web, mobile, reporting, permissions, and AI. A month later, the budget is under pressure, and the essential question finally shows up. Which version of this product can a customer use, trust, and pay for without forcing the company into a six-month build?

That question matters more in Canada because early product decisions carry extra weight. Budget planning happens in CAD. Privacy obligations under PIPEDA can affect what you collect, where you store it, and who can access it. If you are building for healthcare, insurance, or finance, sector-specific rules can narrow your options even further. Founders who treat compliance and scope as “later” problems usually pay for rework.

A workable MVP is the first version with one complete user outcome and just enough infrastructure to test it properly. For a clinic, that might be compliant patient intake with audit trails. For a brokerage, it could be a quoting workflow that cuts manual follow-up. For a dealership group, it may be lead routing and status tracking before any advanced analytics are added.

Keep the standard simple. One user segment. One painful job. One clear result.

Cost discipline matters here, too. In the Canadian market, MVP budgets often vary widely based on product complexity, integrations, and compliance needs. A founder building a simple internal workflow tool will face a different budget than a team handling personal data across provinces. SR&ED can improve the economics of eligible technical work, but it does not rescue a bloated scope. It rewards credible development activity, not unclear product thinking.

Before any code starts, it helps to run a short pre-project software consulting process to pressure-test scope, risk, and delivery assumptions. That step is often cheaper than rebuilding the wrong foundation after launch.

Validate Your Idea Before You Build Anything

A Toronto founder can spend $40,000 to $80,000 on an MVP, only to learn three months later that buyers liked the pitch but would not change their process, share data, or pay for the outcome. That is a validation failure, not a development failure.

Early risk sits in demand, buying behaviour, and operational reality. Canadian startups feel that pressure quickly because budgets are tighter, sales cycles can be slower in regulated sectors, and products that touch personal information may need to account for PIPEDA from the start. If the problem is weak, compliant code still misses the mark.

Start With the Problem, Not the Interface

Validation works best when the audience is narrow and specific. “Canadian small businesses” is too broad to be useful. Independent clinics in Ontario, Calgary-based field service firms, or brokerages handling manual renewals give you something you can test properly.

Ask about behaviour, not opinions. Founders often hear “I'd use that” and treat it as evidence. It is not. Useful interviews show what people do today, where the process breaks, what delays cost them, and who controls the budget or compliance review.

Use prompts like these:

Current workaround: “How are you handling this today?”

Recent example: “Tell me about the last time this failed.”

Cost of delay: “What does this problem cost in time, revenue, or staff effort?”

Approval path: “Who needs to sign off before a new tool gets adopted?”

Switch trigger: “What would make you replace the current process?”

In Canadian B2B products, one answer matters more than founders expect. “We'd need IT, operations, and privacy review involved” changes the product path immediately. If personal data is involved, validation should test not only demand but also whether the buyer will trust your handling of consent, retention, and access controls.

Repeated pain matters more than early feature requests.

If you need a more disciplined way to pressure-test assumptions before development, this guide to pre-project software consulting is a practical model for reducing risk before code starts.

Use Low-Cost Validation Before Custom Development

Founders do not need a full product to test demand. A landing page, a concierge MVP, a manually delivered service, or a clickable prototype can all produce useful evidence. The point is to observe commitment.

For example, a Vancouver startup building software for private clinics might begin with a simple page offering faster patient intake for one clinic type, then run manual onboarding behind the scenes. If clinics book calls, share forms, and ask workflow questions, that is stronger evidence than positive comments on LinkedIn. If they hesitate once privacy or integration questions come up, that is also useful. It tells you where the actual objection sits.

A practical validation stack for a Canadian startup might look like this:

| Validation method | Best use | What to look for |
|---|---|---|
| Landing page | Test positioning and audience interest | Sign-ups, demo requests, waitlist intent |
| Concierge MVP | Test service value before automation | Repeat usage, willingness to pay, delivery friction |
| Clickable prototype in Figma | Test flow clarity and comprehension | Confusion points, drop-off, and task completion |
| Direct outreach | Test urgency with a defined segment | Reply quality, meeting conversion, pilot interest |

Keep it narrow. One segment. One painful job. One promised result.

What Good Validation Looks Like

Strong validation has some cost attached to it. A prospect books a time with the team. They agree to a pilot. They introduce the operations lead. They share internal requirements. They ask about pricing, procurement, or data handling. Those signals carry weight because they require effort and some level of internal commitment.

Weak validation looks different. Friendly praise. Broad interest without a next step. Requests for a long list of features before any trial. Advisors saying the market is “big” without naming a buyer, a budget owner, or a clear trigger to switch.

I advise founders to look for proof in three areas:

Behaviour: Will people take a concrete next step?

Economics: Is the pain expensive enough to justify a purchase?

Constraints: Can the product survive the buyer's security, privacy, and process requirements?

That last point matters in Canada. A startup serving healthcare, fintech, HR, or any workflow involving personal information cannot leave trust questions until after launch. PIPEDA concerns do not need a full legal programme at the validation stage, but they do need to show up in customer conversations early. The same is true for technical uncertainty. If your concept depends on novel engineering, SR&ED may offset part of the eligible development work later, but tax incentives do not validate demand.

When the evidence is weak, do not add more features to compensate. Refine the segment, sharpen the problem statement, or change the workflow you are testing. Founders save real money when they kill a weak assumption before the build starts.
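Interview notes become easier to act on when each observation is tagged against the three proof areas above (behaviour, economics, constraints) and marked by whether it cost the prospect real effort. The sketch below shows one way to flag the areas that still lack committed evidence. The `Evidence` record, its fields, and the sample notes are all hypothetical.

```python
from dataclasses import dataclass

# The three proof areas described above.
AREAS = ("behaviour", "economics", "constraints")


@dataclass(frozen=True)
class Evidence:
    note: str
    area: str        # one of AREAS
    committed: bool  # True if the prospect spent real effort (a call, a pilot, shared data)

    def __post_init__(self) -> None:
        if self.area not in AREAS:
            raise ValueError(f"unknown proof area: {self.area}")


def missing_proof(evidence: list[Evidence]) -> list[str]:
    """Return the proof areas that have no committed (strong) evidence yet."""
    strong = {e.area for e in evidence if e.committed}
    return [area for area in AREAS if area not in strong]


if __name__ == "__main__":
    interview_log = [
        Evidence("Clinic manager booked a workflow call", "behaviour", True),
        Evidence("Said the idea sounds great", "behaviour", False),
        Evidence("Shared weekly staff hours lost to paper intake", "economics", True),
        Evidence("Advisor says the market is big", "economics", False),
    ]
    print(missing_proof(interview_log))  # → ['constraints']
```

Friendly praise is recorded, but it never counts as proof. In this example the gap is clear: no prospect has yet engaged with privacy or process requirements.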

Define a Lean and Powerful MVP Scope

A founder gets through customer discovery with encouraging signals, then loses three months building the wrong version of the product. I see that pattern often. The problem is usually not weak engineering. It is weak scope.

A good MVP scope protects learning. It gives users one clear path from problem to outcome, and it gives the team a build small enough to ship without draining the budget.

Start With the Smallest Complete Workflow

Founders often confuse an MVP with a trimmed feature list. The scope should be defined around a usable workflow instead. If the first user cannot complete the main job in one short flow, the product is still too broad.

For a booking product, that usually means four parts:

Customer entry point: A landing page or booking form

Core action: Choose a service, pick a time, submit

Confirmation: Email or on-screen confirmation

Operator view: A simple admin screen to manage bookings

That is enough to test demand and delivery. Loyalty points, referral logic, advanced reporting, and native mobile apps can wait.

This matters even more in Canada, where early budgets are tight and burn is often measured in a few months, not a few funding rounds. A founder in Toronto or Vancouver can spend CAD 25,000 to CAD 60,000 very quickly if the team starts building edge cases before proving the main transaction works.
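To show how small the four-part booking workflow really is, here is a minimal in-memory sketch. It assumes a single operator, so one slot means one booking, and it leaves out persistence, authentication, and email delivery. All class and method names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime
from itertools import count


@dataclass
class Booking:
    booking_id: int
    customer_email: str
    service: str
    slot: datetime
    confirmed: bool = False


@dataclass
class BookingStore:
    services: set[str]
    bookings: dict[int, Booking] = field(default_factory=dict)
    _ids: count = field(default_factory=lambda: count(1), init=False, repr=False)

    def submit(self, email: str, service: str, slot: datetime) -> Booking:
        """Core action: choose a service, pick a time, submit."""
        if service not in self.services:
            raise ValueError(f"unknown service: {service}")
        # Single-operator assumption: any existing booking blocks the slot.
        if any(b.slot == slot for b in self.bookings.values()):
            raise ValueError("slot already taken")
        booking = Booking(next(self._ids), email, service, slot)
        self.bookings[booking.booking_id] = booking
        return booking

    def confirm(self, booking_id: int) -> str:
        """Confirmation: mark the booking and return an on-screen message."""
        booking = self.bookings[booking_id]
        booking.confirmed = True
        return f"Booking {booking_id} confirmed for {booking.slot:%Y-%m-%d %H:%M}"

    def admin_view(self) -> list[Booking]:
        """Operator view: all bookings, earliest slot first."""
        return sorted(self.bookings.values(), key=lambda b: b.slot)


if __name__ == "__main__":
    store = BookingStore(services={"Consultation", "Follow-up"})
    booking = store.submit("pat@example.com", "Consultation", datetime(2025, 3, 4, 10, 0))
    print(store.confirm(booking.booking_id))  # → Booking 1 confirmed for 2025-03-04 10:00
    print(len(store.admin_view()))  # → 1
```

Loyalty points, referrals, and reporting would each add new entities and rules to this model. That extra surface is why they belong in a later release.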

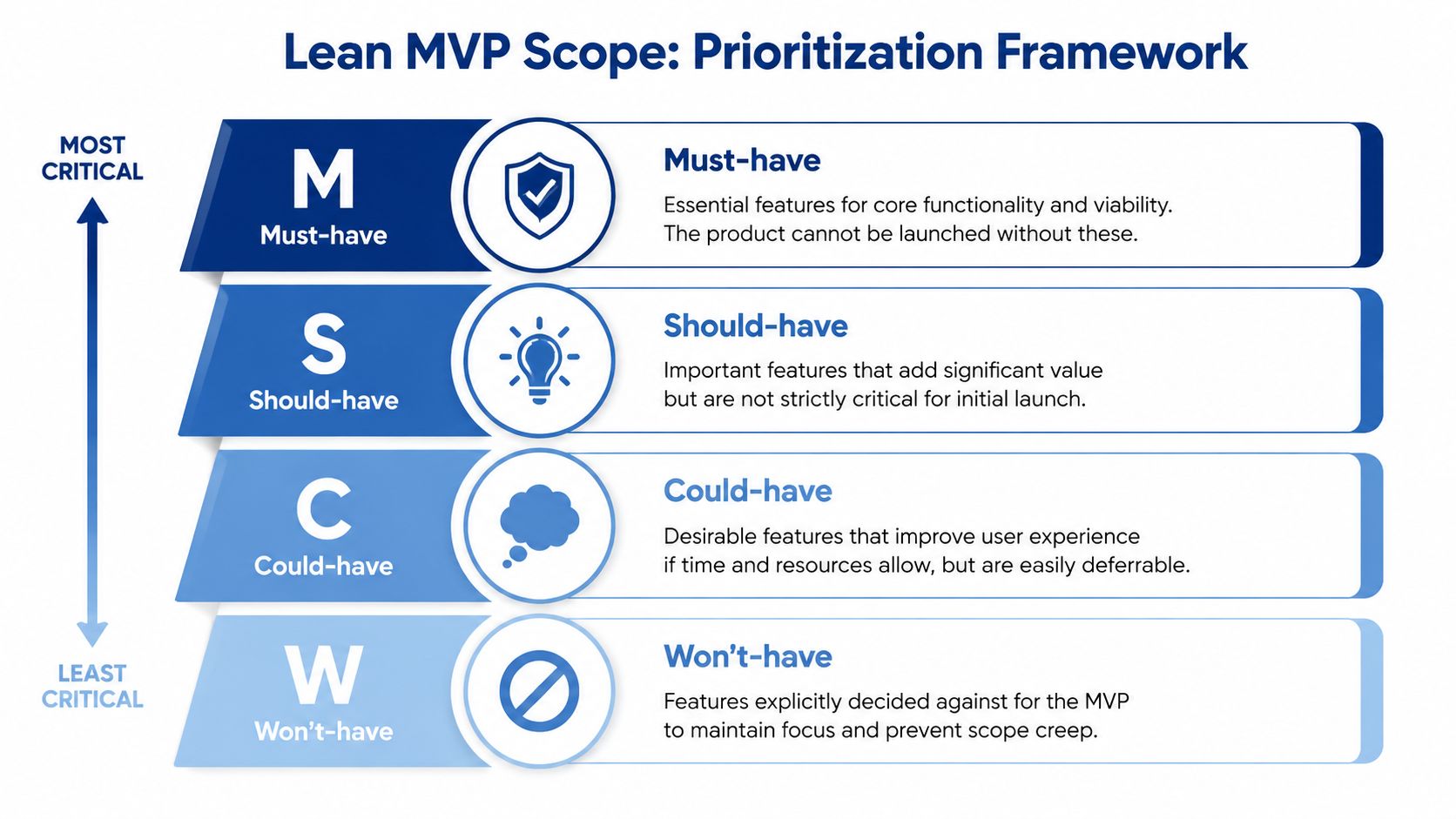

Use a Prioritisation Method That Forces Trade-Offs

MoSCoW works well because it creates clear categories:

Must-have: The product fails without it

Should-have: Useful, but the MVP can launch without it

Could-have: Nice to include if time and budget allow

Won't have: Excluded from this release on purpose

RICE (Reach, Impact, Confidence, Effort) is useful after that. It helps compare features that all sound reasonable in founder conversations but do not deserve equal effort. If a feature reaches few users, has an unclear impact, or adds major engineering cost, it should drop down the list.
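The RICE score is usually calculated as (Reach × Impact × Confidence) / Effort. The sketch below scores and ranks a few features from the Shopify example later in this section. It assumes some common conventions: reach counted as users per quarter, impact on a small fixed scale (0.25 to 3), confidence between 0 and 1, and effort in person-months. The feature numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    name: str
    reach: int         # users affected per quarter (assumed time window)
    impact: float      # common convention: 0.25 minimal ... 3 massive
    confidence: float  # 0.0 to 1.0
    effort: float      # person-months

    @property
    def rice(self) -> float:
        """RICE score = (Reach x Impact x Confidence) / Effort."""
        if self.effort <= 0:
            raise ValueError("effort must be positive")
        return self.reach * self.impact * self.confidence / self.effort


def rank(features: list[Feature]) -> list[Feature]:
    """Highest RICE score first."""
    return sorted(features, key=lambda f: f.rice, reverse=True)


if __name__ == "__main__":
    backlog = [
        Feature("One AI personalisation block", reach=200, impact=2, confidence=0.8, effort=2),
        Feature("Multi-store support", reach=20, impact=1, confidence=0.5, effort=3),
        Feature("Basic click/sales reporting", reach=200, impact=1, confidence=0.9, effort=1),
    ]
    for feature in rank(backlog):
        print(f"{feature.name}: {feature.rice:.1f}")
```

The scores are only as good as the estimates behind them. Their value lies in making those estimates explicit, so low-reach, high-effort items become obvious candidates to cut.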

I usually tell founders to cut the first draft by at least a third. The first scope draft reflects enthusiasm. The second draft reflects the strategy.

Build for the Decision You Need To Make

A lean MVP should answer a specific business question. Will merchants install the Shopify app? Will the clinic staff send the intake form? Will a finance team trust the workflow enough to try it with real data?

That framing changes what belongs in version one.

Take an AI-powered Shopify app for smaller Canadian merchants. The original feature list often includes:

AI recommendations

Inventory alerts

Personalised homepage blocks

Customer segmentation

Email automation

Loyalty integration

Analytics dashboard

Multi-store support

Role-based permissions

Theme editor tools

A tighter MVP keeps:

Store connection

One AI-driven personalisation block

Basic reporting on whether that block improves clicks or sales

Now the founder can answer a real question. Does a merchant install it, keep it active, and see enough value to continue?

Scope Compliance Work at the Right Level

Canadian startups can ship lean without being careless. If the product handles personal information, scope privacy requirements into the MVP at the level the workflow needs. For many startups, that means collecting only necessary data, setting clear consent language, limiting access, and documenting where data is stored so PIPEDA questions do not derail early sales.

That does not mean building an enterprise compliance programme before launch. It means avoiding expensive rework later.

The same logic applies to government incentives. SR&ED can offset eligible technical work, but it should not become an excuse to overbuild. Founders sometimes rationalise extra complexity because part of the engineering cost may be recoverable. The better move is to keep the MVP narrow, document technical uncertainty properly, and claim eligible work if it qualifies.
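The earlier point about collecting only necessary data can be enforced in code with an allowlist at the point of intake. Below is a minimal sketch for a hypothetical clinic intake form. The field names and the consent flag are illustrative assumptions. It shows the data-minimisation idea only and is not a statement of what PIPEDA requires for any specific product.

```python
# Hypothetical allowlist for a clinic intake form; the field names are illustrative.
ALLOWED_INTAKE_FIELDS = frozenset(
    {"first_name", "last_name", "date_of_birth", "reason_for_visit", "consent_given"}
)


def minimise(submission: dict) -> dict:
    """Keep only allowlisted fields and refuse records without explicit consent.

    Fields outside the allowlist are dropped before anything is stored or logged.
    """
    if submission.get("consent_given") is not True:
        raise ValueError("explicit consent is required before storing intake data")
    return {key: value for key, value in submission.items() if key in ALLOWED_INTAKE_FIELDS}


if __name__ == "__main__":
    raw = {
        "first_name": "Sam",
        "reason_for_visit": "Follow-up",
        "consent_given": True,
        "social_insurance_number": "not needed for intake",
    }
    print(minimise(raw))
```

An allowlist fails closed. A new field stays out of storage until someone decides it is needed, which is cheaper than auditing a database later.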

Write User Stories That Expose Vague Thinking

Good user stories make fluff obvious. “As a clinic administrator, I want to send patients a secure intake link so I can reduce manual paperwork before appointments” gives the team something concrete to design and estimate. “As an admin, I want full workflow control” hides too many assumptions.
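Some teams add a lightweight check to backlog grooming that flags stories missing a benefit clause or using unbounded words. The sketch below assumes the “As a…, I want… so (that)…” template from the example above. The regular expression and the list of vague terms are hypothetical and deliberately simple.

```python
import re

# Matches the "As a <role>, I want <goal> so (that) <benefit>" template used above.
STORY = re.compile(
    r"^As an? (?P<role>[^,]+), I want (?P<goal>.+?) so (?:that )?(?P<benefit>.+?)\.?$",
    re.IGNORECASE,
)

# Hypothetical list of words that usually signal an unbounded goal.
VAGUE_TERMS = frozenset({"full", "all", "everything", "anything"})


def review_story(text: str) -> list[str]:
    """Return problems found; an empty list means the story passes these basic checks."""
    match = STORY.match(text.strip())
    if match is None:
        return ["missing role, goal, or 'so (that) ...' benefit clause"]
    vague = sorted(set(match["goal"].lower().split()) & VAGUE_TERMS)
    if vague:
        return [f"vague goal wording: {', '.join(vague)}"]
    return []


if __name__ == "__main__":
    print(review_story(
        "As a clinic administrator, I want to send patients a secure intake link "
        "so I can reduce manual paperwork before appointments."
    ))  # → []
    print(review_story("As an admin, I want full workflow control"))
```

A check like this cannot judge whether a story is valuable. It only catches the structural vagueness that makes stories hard to estimate.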

A short backlog might look like this:

| Priority | User story | Keep for MVP |
|---|---|---|
| Must-have | As a user, I can sign up securely | Yes |
| Must-have | As a user, I can complete the main task | Yes |
| Should-have | As an admin, I can export reports | Later |
| Could-have | As a user, I can customise my profile | Later |
| Won't have | As a manager, I can build advanced automations | Not in MVP |

That level of clarity also helps with delivery planning, because scope, architecture, and stack choices are tied together. Founders who need help making those trade-offs should review this step-by-step guide to selecting the right tech stack before the build starts.
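The backlog table earlier in this section can also be stored as data, so the MVP cut is produced by a rule rather than renegotiated in every meeting. Below is a minimal sketch using the MoSCoW categories. The `mvp_scope` helper and its `include_should` switch are hypothetical.

```python
from enum import Enum


class MoSCoW(Enum):
    MUST = "Must-have"
    SHOULD = "Should-have"
    COULD = "Could-have"
    WONT = "Won't have"


# The example backlog from the table above, as (priority, user story) pairs.
BACKLOG = [
    (MoSCoW.MUST, "As a user, I can sign up securely"),
    (MoSCoW.MUST, "As a user, I can complete the main task"),
    (MoSCoW.SHOULD, "As an admin, I can export reports"),
    (MoSCoW.COULD, "As a user, I can customise my profile"),
    (MoSCoW.WONT, "As a manager, I can build advanced automations"),
]


def mvp_scope(backlog: list[tuple[MoSCoW, str]], include_should: bool = False) -> list[str]:
    """Return the stories that make the MVP cut.

    Must-haves are always kept; Should-haves only if the team explicitly opts in.
    """
    keep = {MoSCoW.MUST}
    if include_should:
        keep.add(MoSCoW.SHOULD)
    return [story for priority, story in backlog if priority in keep]


if __name__ == "__main__":
    for story in mvp_scope(BACKLOG):
        print(story)
```

By default, Should-haves stay out. Pulling one in requires an explicit decision, which matches the intent of keeping the first release narrow.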

A strong MVP scope feels slightly uncomfortable. That is normal. It means the team is protecting time, cash, and learning speed instead of building every feature that sounded good in discovery.

Assemble Your Team and Tech Stack

A founder in Toronto hires a freelancer to build a fintech MVP, picks tools based on speed alone, and pushes compliance questions to later. Six weeks in, the product works well enough for a demo, but the team has no clear audit trail, no defined data handling rules, and no one accountable for architecture decisions. The rewrite starts before the launch does.

That pattern is common because team selection and stack selection are tied to the same business risk. The people building the product shape how quickly you ship, how much rework you absorb, and whether privacy obligations are handled properly from day one.

For Canadian startups, those choices carry extra weight. PIPEDA, customer expectations around data handling, and procurement questions from enterprise buyers can surface much earlier than founders expect. If the MVP touches health, finance, insurance, HR, or any workflow with personal information, privacy and governance belong in the build plan, not in a post-launch clean-up list.

Build With Compliance in Scope From the Start

Speed still matters. Rebuilding avoidable mistakes is slower.

Founders do not need a heavy governance process for an MVP. They do need a few baseline controls in place before development gets too far:

Data residency awareness: Know which vendors store or process customer data, and in which jurisdictions.

Access control: Set role-based permissions early if different users should see different records or actions.

Auditability: Log meaningful events so the team can trace changes, approvals, and sensitive actions.

Encryption and authentication: Treat secure sign-in, password handling, and encrypted data transfer as part of the product, not add-ons.

This matters in practical terms. A Saskatchewan health startup, a Montréal insurtech company, and a Vancouver B2B SaaS product will face different buyer questions, but all three can lose time if they cannot answer basic security and privacy concerns during pilot conversations.
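Two of the baseline controls above, role-based access and auditability, fit together in very little code. The sketch below checks a role's permissions and records every attempt, including denied ones. The roles, action names, and the in-memory `AUDIT_LOG` list are hypothetical stand-ins. A real product would write to an append-only store and tie roles to authenticated sessions.

```python
from datetime import datetime, timezone

# Hypothetical role-to-permission map for a clinic intake product.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "clinic_admin": {"view_intake", "edit_intake", "export_intake"},
    "front_desk": {"view_intake"},
}

# In-memory stand-in for an append-only audit store.
AUDIT_LOG: list[dict] = []


def authorise(user_id: str, role: str, action: str, record_id: str) -> bool:
    """Check role-based access and record every attempt, allowed or denied."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    AUDIT_LOG.append(
        {
            "at": datetime.now(timezone.utc).isoformat(),
            "user": user_id,
            "role": role,
            "action": action,
            "record": record_id,
            "allowed": allowed,
        }
    )
    return allowed


if __name__ == "__main__":
    print(authorise("u-17", "front_desk", "export_intake", "intake-42"))  # → False
    print(AUDIT_LOG[-1]["action"], AUDIT_LOG[-1]["allowed"])  # → export_intake False
```

Unknown roles get no permissions, and denied attempts are logged as well. That combination is what lets a team answer a pilot customer who asks who tried to access which records.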

Choose a Team Model That Matches Your Operating Reality

The right delivery model depends on product complexity, founder experience, and how much day-to-day coordination capacity exists inside the company.

| Model | Best For | Pros | Cons | Cost profile (CAD) |
|---|---|---|---|---|
| In-house team | Technical founders building a long-term product capability | Deep product ownership, direct control, stronger internal context over time | Slow hiring, higher fixed cost, more management overhead at an early stage | Usually the highest commitment and least flexible for an MVP |
| Agency partner | Non-technical founders, regulated products, teams that need speed and structure | Cross-functional delivery, faster setup, stronger product and QA process, clearer accountability | Requires careful scoping and vendor selection | Commonly falls within mid-five-figure MVP budgets in Canada, depending on scope and compliance needs |
| Freelancers | Narrow builds with clear specifications and active founder oversight | Flexible resourcing, useful for design, prototypes, or isolated tasks | Coordination risk, uneven delivery quality, and no shared product process by default | Highly variable |

A freelancer-only approach can work. It usually works best when the founder already has product and technical leadership in place. Without that, someone still has to write clear requirements, review architecture decisions, manage QA, and decide what gets cut when time runs short.

Agency support often makes sense for Canadian founders who need a working product, a documented process, and enough discipline to keep scope under control. That is especially true if the roadmap includes enterprise sales, investor diligence, or an SR&ED claim later. Clean technical documentation and a traceable development process help on all three fronts.

Pick Technology for the Next 12 Months

Early stack decisions should match the next stage of the business, not the version founders hope to have after a large round.

Use simple tools when the product risk is mostly about demand. Use custom development when the product risk is tied to workflow complexity, integrations, security, or ownership. Use cross-platform mobile frameworks when mobile matters now, and the budget does not support separate native builds.

A few practical rules help:

No-code or low-code fits simple internal workflows, concierge-style services, and early validation with limited automation.

Custom web development fits products that need bespoke logic, customer-specific permissions, integrations, or tighter security controls.

Cross-platform mobile development fits mobile-first products that need one codebase to keep early costs in check.

Cloud choices should be reviewed against customer expectations, data handling requirements, and future procurement questions.

Canadian founders sometimes overpay for sophistication they do not need, then underinvest in the areas that matter. Trendy frameworks do not fix poor domain modelling. Cheap tooling does not stay cheap if the team cannot support secure authentication, access control, reporting, or integrations six months later.

If you need a practical framework for evaluating options, review this guide to selecting the right tech stack for your product stage and business goals.

A fast MVP that forces a full rewrite after first traction is rarely a bargain. It is a delayed invoice.

One final point. Compare delivery partners on process, not just price. Ask who owns discovery, how backlog trade-offs are made, what QA looks like, how security issues are surfaced, and what documentation you keep for future hiring or SR&ED support. A provider like Cleffex Digital Ltd can be a fit for founders who need structured discovery, MVP engineering, and agile delivery under one roof, but the standard is the same either way. The team should help you make disciplined trade-offs, not just write code quickly.

Master the Build-Measure-Learn Feedback Loop

A strong MVP process doesn't aim for perfection. It aims for useful learning in short cycles. That's the primary engine behind product progress.

Founders often imagine development as a straight line. Requirements go in, software comes out, launch happens. In practice, healthy MVP delivery looks more like a loop. Build a thin slice. Put it in front of users. Watch what they do. Adjust. Repeat.

What the Loop Looks Like in Real Work

The rhythm is usually simple. Product and design define the next smallest testable increment. Developers build it in a sprint. QA checks the path that matters most. Early users try it. The team reviews what changed in user behaviour, then decides the next move.

Canadian startups that rigorously follow the Build-Measure-Learn loop see 25% higher user retention rates. A common process involves 2-week Scrum sprints, prototyping, and A/B testing with 100 to 500 early users, leading 35% of validated MVPs to secure Series A funding in under 18 months.

That matters because it reframes what “good development” means. It's not the team with the longest feature list. It's the team that learns fastest without breaking trust.

Build Less, Observe More

Before committing a feature to production code, use lighter artefacts where possible:

Wireframes in Figma: Good for early layout and flow reviews

Clickable prototypes: Good for testing user understanding before engineering starts

Sprint demos: Good for exposing misunderstandings while changes are still cheap

A/B tests: Good when you have enough user traffic to compare options meaningfully
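Whether traffic is "enough" can be checked rather than guessed. One common method for comparing two conversion rates is the two-proportion z-test, sketched below with the normal approximation. It assumes users are independently assigned to variants and that counts are not tiny. The function name and sample numbers are hypothetical.

```python
from math import erfc, sqrt


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Compare conversion rates of variants A and B.

    Returns (z, two-sided p-value) under the null hypothesis that both
    variants convert at the same underlying rate (normal approximation).
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("all users converted or none did; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = erfc(abs(z) / sqrt(2))
    return z, p_value


if __name__ == "__main__":
    z, p = two_proportion_z_test(conv_a=40, n_a=250, conv_b=60, n_b=250)
    print(f"z = {z:.2f}, p = {p:.3f}")
```

With a few hundred early users, only large differences tend to reach conventional significance. At that scale, smaller effects are better investigated through interviews and session reviews than through A/B tests.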

The first release of a workflow doesn't need every edge case solved. It needs the main path to be clear and functional.

If your sprint review focuses only on what shipped, you're missing half the value. Review what the team learned, too.

If you're building with an agile product team, Cleffex's article on agile software development benefits is a practical companion for founders who want to understand why short iterations outperform long silent build phases.

Measure the Right Things

A surprising number of teams launch and then stare at vanity metrics. Page views, raw sign-ups, and social buzz can be useful context, but they don't tell you whether the product solved anything.

For an MVP, better questions are:

Are users reaching the core action?

Are they returning after the first experience?

Are they dropping off at the same step?

Are support requests exposing the same confusion every week?

Are users asking for deeper use, not just more features?

A simple measurement view for early teams:

| Stage | What to measure | Why it matters |
|---|---|---|
| Activation | Did users complete the core action? | Confirms first-use clarity |
| Retention | Did they come back? | Signals ongoing value |
| Feedback quality | What complaints or requests repeat? | Reveals friction and unmet needs |
| Conversion intent | Did they ask to expand usage or pay? | Indicates commercial potential |
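The activation and retention rows can be computed from a basic event log, along with a step-by-step funnel that shows where users drop off. Below is a minimal sketch. The `(user_id, event_name, day)` event shape and the `core_action` event name are hypothetical stand-ins for whatever an analytics tool actually records.

```python
from collections import defaultdict
from datetime import date

# Hypothetical event shape: (user_id, event_name, day).
Event = tuple[str, str, date]
CORE_ACTION = "core_action"  # stand-in name for the product's main job


def activation_rate(events: list[Event], signed_up: set[str]) -> float:
    """Share of signed-up users who completed the core action at least once."""
    if not signed_up:
        return 0.0
    activated = {user for user, name, _ in events if name == CORE_ACTION}
    return len(activated & signed_up) / len(signed_up)


def retention_rate(events: list[Event]) -> float:
    """Share of activated users who did the core action on at least two distinct days."""
    days: dict[str, set[date]] = defaultdict(set)
    for user, name, day in events:
        if name == CORE_ACTION:
            days[user].add(day)
    if not days:
        return 0.0
    return sum(1 for d in days.values() if len(d) > 1) / len(days)


def funnel(events: list[Event], steps: list[str]) -> list[tuple[str, int]]:
    """Count users who reached each step and every step before it."""
    reached = []
    remaining = None
    for step in steps:
        users = {user for user, name, _ in events if name == step}
        remaining = users if remaining is None else remaining & users
        reached.append((step, len(remaining)))
    return reached
```

A sharp fall between two adjacent funnel steps, repeated week after week, usually tells you more than any single headline number.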

How To Keep the Loop Healthy

Teams break the loop when they do one of three things. They build too much before feedback. They collect feedback but don't prioritise it. Or they react to every comment without checking whether it reflects the target customer.

A better operating rhythm looks like this:

Choose one hypothesis per sprint. Example: users will complete the intake faster with a simplified form.

Ship the smallest version that tests it.

Collect both behavioural and qualitative feedback.

Decide whether to keep, change, or remove it.

This is where MVP development for startups becomes practical. You're not paying for software alone. You're paying for a sequence of decisions. The loop improves those decisions.
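The "one hypothesis per sprint" rhythm works best when the keep, change, or remove rule is agreed before the sprint starts. The sketch below encodes one such rule. It assumes each hypothesis has a baseline, a success target, and a direction, so that lower can be better, as with intake time. The class, field names, and thresholds are hypothetical, and qualitative feedback should still inform the final call.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    KEEP = "keep"
    CHANGE = "change"
    REMOVE = "remove"


@dataclass(frozen=True)
class SprintHypothesis:
    statement: str
    metric: str
    baseline: float
    target: float                  # value the team agreed counts as success (assumed)
    higher_is_better: bool = True

    def decide(self, observed: float) -> Decision:
        """Keep if the target was met, change if the metric improved, remove otherwise."""
        sign = 1 if self.higher_is_better else -1
        if sign * observed >= sign * self.target:
            return Decision.KEEP
        if sign * observed > sign * self.baseline:
            return Decision.CHANGE
        return Decision.REMOVE


if __name__ == "__main__":
    simplified_form = SprintHypothesis(
        statement="Users complete intake faster with a simplified form",
        metric="median intake minutes",
        baseline=12,
        target=8,
        higher_is_better=False,
    )
    print(simplified_form.decide(10))
```

Writing the target down before the sprint stops the team from reinterpreting a weak result as a success after the fact.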

Launch Your MVP and Plan What Comes Next

Launch day feels like a finish line because it's visible. The hard work before it has usually been private. But for an MVP, launch is the moment the market finally gets a vote.

That's why the best founders treat launch as the start of a more honest phase. Real users don't care about your roadmap logic. They care whether the product solves the job they hired it to do.

Your Launch Checklist Should Stay Narrow

A useful MVP launch checklist is short and operational:

Core journey tested: The main user path works consistently

Analytics in place: You can see activation, drop-off, and repeat usage

Support channel ready: Users know where to report issues

Feedback capture planned: Interviews, forms, or direct outreach are scheduled

Pilot cohort defined: You know who the first users are and why they were chosen
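For teams that track release readiness in a script or CI job, the five checklist items above can act as a simple go/no-go gate. In the sketch below, any item missing from the status map is treated as not done. The function and variable names are hypothetical.

```python
# The five launch checklist items above, as a simple go/no-go gate.
LAUNCH_CHECKLIST = (
    "Core journey tested",
    "Analytics in place",
    "Support channel ready",
    "Feedback capture planned",
    "Pilot cohort defined",
)


def launch_blockers(status: dict[str, bool]) -> list[str]:
    """Return checklist items not yet marked done; items missing from status count as not done."""
    return [item for item in LAUNCH_CHECKLIST if not status.get(item, False)]


if __name__ == "__main__":
    status = {item: True for item in LAUNCH_CHECKLIST}
    status["Analytics in place"] = False
    blockers = launch_blockers(status)
    print("Ready to launch" if not blockers else f"Blocked by: {', '.join(blockers)}")
```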

For a broader release checklist, essential product launch steps on Saaspa.ge can help founders catch execution gaps that don't show up in product discussions.

Use the First Weeks To Decide, Not To Celebrate

The strongest post-launch habit is a structured review cadence. Weekly is usually enough at the start. Look at user behaviour, support tickets, interview notes, and internal observations together. Don't separate “product feedback” from “customer feedback.” They're usually describing the same truth from different angles.

For some Canadian startups, especially in healthcare and insurance, AI-enhanced MVPs have created a meaningful early advantage. Intigate Technologies' analysis of MVP costs and outcomes cites a Startup Canada survey in which 65% of AI-powered MVP launches in Canadian healthcare and insurance achieved 50% higher user engagement metrics within 8 weeks of launch, with complex MVPs yielding a 2.5x ROI through 25% faster iterations. That doesn't mean every MVP needs AI. It means targeted automation or personalisation can materially improve early learning when it serves a real user problem.

Early launch data should answer one question before any other. Are users getting the value you promised?

Build a Now, Next, Later Roadmap

After launch, your roadmap should stop being a wishlist and start becoming evidence-based.

A simple structure works:

| Roadmap bucket | What belongs there |
|---|---|
| Now | Bugs, UX blockers, compliance fixes, activation friction |
| Next | Repeated requests from target users, proven workflow improvements |
| Later | Expansion features, adjacent use cases, and lower-priority ideas |

This format keeps stakeholders honest. It also protects the team from reacting emotionally to isolated requests.
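The Now, Next, Later table above can be turned into explicit triage rules, so isolated requests do not jump the queue. In the sketch below, blockers and compliance fixes always go to Now. Other items move to Next only after several target-segment users have asked for them. The item categories and the default threshold of three requests are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical item categories that always land in "Now" (from the table above).
NOW_KINDS = frozenset({"bug", "ux_blocker", "compliance_fix", "activation_friction"})


@dataclass(frozen=True)
class RoadmapItem:
    title: str
    kind: str                   # e.g. "bug", "compliance_fix", "feature", "expansion"
    target_user_requests: int   # distinct users from the target segment who asked for it


def bucket(item: RoadmapItem, repeat_threshold: int = 3) -> str:
    """Assign an item to Now, Next, or Later using simple, explicit rules."""
    if item.kind in NOW_KINDS:
        return "Now"
    if item.target_user_requests >= repeat_threshold:
        return "Next"
    return "Later"


if __name__ == "__main__":
    items = [
        RoadmapItem("Consent text unclear on intake form", "compliance_fix", 0),
        RoadmapItem("Bulk export for clinic managers", "feature", 5),
        RoadmapItem("Dental clinic variant", "expansion", 1),
    ]
    for item in items:
        print(f"{bucket(item):5} {item.title}")
```

The threshold is a judgment call. Making it explicit lets stakeholders argue about the rule once, rather than about every individual request.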

You don't need certainty after launch. You need discipline. Sometimes the right move is to persevere. Sometimes it's to narrow further. Sometimes the market shows you a better use case than the one you started with. The founders who win aren't the ones who guessed right on day one. They're the ones who learn quickly without losing focus.

If you're planning an MVP and need a team that can handle discovery, scoping, compliance-aware delivery, and iterative product development, Cleffex Digital Ltd is one option to evaluate. They work with startups, healthcare, insurance, automotive, and growth-stage businesses that need practical software delivery rather than vague product advice.