The market signal is hard to ignore. According to Fortune Business Insights, the global AI in insurance market is projected to grow at a 35.7% CAGR from 2026 to 2034, rising from USD 13.45 billion to USD 154.39 billion, with North America holding nearly 40% of market share.

For Canadian insurers, that changes the conversation. AI is no longer a lab exercise or a side innovation project. It’s becoming part of how insurers price risk, process claims, detect fraud, serve policyholders, and satisfy regulators who increasingly expect stronger governance around data, bias, and auditability.

What global guides often miss is the Canadian context. Here, the value of AI solutions in insurance depends less on whether a model works in theory and more on whether it can operate within PIPEDA, align with OSFI expectations, and fit the data reality of a small or medium-sized insurer that doesn’t have a pristine enterprise platform.

Why AI in Insurance Is No Longer Optional

Many executives still frame AI as a timing question. Should we start now, or wait until the tools mature further?

That’s the wrong question. The tools are already mature enough in the areas that matter most to insurers: underwriting support, claims workflow automation, fraud detection, document handling, and customer service augmentation. The issue now is competitive readiness.

In Canada, insurers are operating in a difficult mix of pressures. Customers want faster answers and cleaner digital journeys. Claims teams are dealing with high-volume events and rising documentation loads. Underwriters need better decision support without creating black-box governance risk. At the same time, firms have to manage regional variability, privacy obligations, and legacy core systems that weren’t built for modern data flows.

Where the urgency comes from

The fastest-moving insurers aren’t adopting AI because it sounds cutting-edge. They’re adopting it because manual operations don’t scale well under current conditions.

Three business realities keep pushing adoption forward:

- Claims volume and complexity: Adjusters and claims teams need help triaging routine files, extracting data from documents, and flagging exceptions early.

- Pricing pressure: Underwriters need better use of behavioural, historical, and third-party data to support more accurate risk selection.

- Service expectations: Policyholders increasingly expect fast, coherent interactions across phone, chat, email, and self-serve channels.

Practical rule: If a process already depends on repeatable decisions, large document volumes, or image review, AI is usually worth evaluating.

Why Canadian insurers need a different playbook

A Canadian insurer can’t just copy a US or UK implementation pattern. Consent requirements, privacy handling, explainability expectations, and supervisory scrutiny change the design choices.

Small and medium-sized insurers feel this most sharply. They often have less technical overhead to untangle, which helps. But they also have tighter budgets, leaner compliance capacity, and fewer internal specialists to validate models, monitor drift, or redesign workflows.

That’s why the strongest AI strategy usually starts with business friction, not with a platform purchase. Pick the process where delay, inconsistency, or cost is already visible. Then build a governed path from data intake to model output to human review.

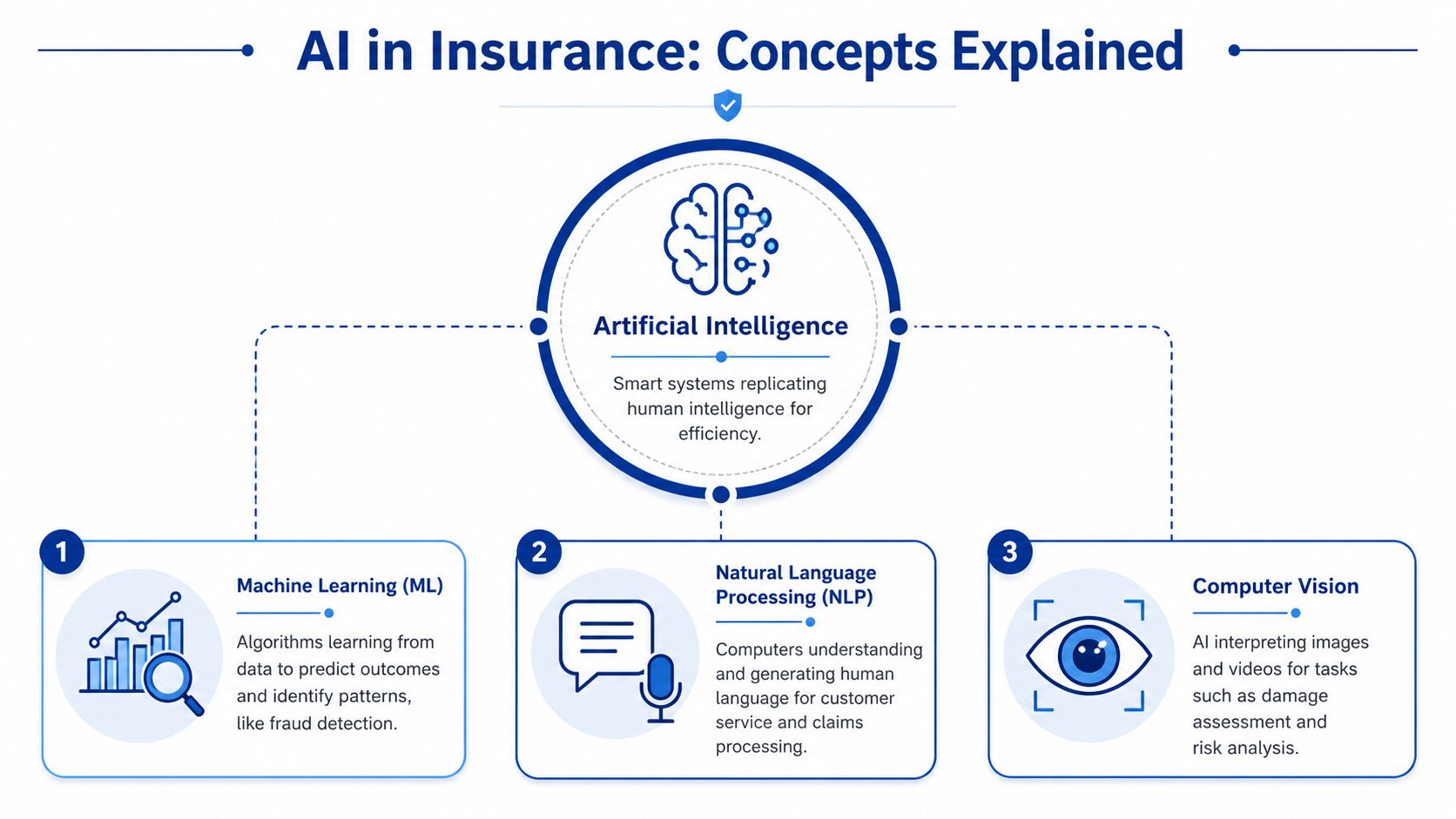

Understanding What AI Really Means for Insurance

Insurance leaders don’t need a computer science lecture. They need a clear view of what each AI category does inside day-to-day operations.

At the broadest level, artificial intelligence means software performing tasks that normally require human judgement, pattern recognition, or language handling. In insurance, that might mean sorting incoming claims, spotting unusual behaviour, summarising policy documents, or routing a file to the right team.

Machine learning for prediction

Machine learning is the part most insurers mean when they talk about AI for core decisions. Think of it as a very fast analyst that learns from past claims, underwriting outcomes, fraud indicators, and customer interactions to predict what’s likely to happen next.

In practice, ML is useful when the question is predictive:

- Which claims should be reviewed first

- Which submissions are likely to bind cleanly

- Which policies show high fraud risk

- Which customers may need retention attention

This is where much of the operational value sits. It doesn’t replace insurance judgement. It gives underwriters, adjusters, and operations teams a more informed starting point.

Generative AI for language and workflow support

Generative AI works differently. Instead of predicting risk or fraud scores, it generates or restructures content. That makes it useful for customer-facing and internal language tasks such as drafting claim summaries, preparing agent notes, producing policy explanations, and handling routine service conversations.

Done well, it reduces admin drag. Done poorly, it creates compliance and accuracy risk because generated language can sound confident even when it’s wrong.

That’s why insurers should treat generative AI as a supervised assistant, not an autonomous decision-maker for regulated actions.

NLP and computer vision in practical terms

Two capabilities matter a lot in insurance operations.

- Natural language processing: This helps systems read emails, forms, adjuster notes, and policy wording.

- Computer vision: This helps systems interpret photos, scanned documents, and damage imagery.

A good example is intake. Many insurers still receive information through PDFs, email attachments, handwritten forms, and broker submissions that arrive in inconsistent formats. Data extraction tools built for insurance carriers help here because they turn messy incoming documents into structured fields that downstream workflows can use.

The fastest AI wins in insurance often come from making unstructured information usable, not from building the most advanced model.

For executives, the practical distinction is simple. Use traditional AI and ML where you need classification, prediction, or anomaly detection. Use generative AI where staff spend too much time reading, rewriting, summarising, or replying.

Core AI Applications Transforming Insurance Operations

The most effective AI solutions in insurance are tied to specific workflow bottlenecks. They don’t start as broad “transformation” programmes. They start where a team already knows the work is slow, repetitive, or inconsistent.

Underwriting that moves faster and flags exceptions better

Underwriting is often the cleanest place to create value because the process already relies on rules, historical patterns, and repeatable review steps. According to the NAIC overview of artificial intelligence in insurance, AI automation has improved straight-through processing (STP) by 25% to 40% for leading P&C insurers and cut underwriting cycle times from over a week to less than 24 hours.

That result matters because it changes the role of the underwriter. Instead of spending most of the day collecting, reformatting, and screening inputs, the team can spend more time on referrals, exceptions, and pricing judgement.

The best underwriting implementations usually do four things well:

- Pull data from multiple sources: telematics, public records, broker submissions, prior claims, and internal policy history

- Score submissions early: likely standard risks move quickly, unclear files get routed for review

- Explain outputs: users need to see why a model increased or downgraded risk

- Preserve override controls: underwriters still need authority and audit trails
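The scoring, routing, and override steps can be sketched in a few lines. This is a minimal illustration, assuming an upstream model already returns a risk score between 0 and 1 plus reason codes; the thresholds, route names, and the `Submission` and `Decision` types are hypothetical placeholders rather than any product’s API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical thresholds; real values would come from model validation.
AUTO_ACCEPT_BELOW = 0.30
REFER_ABOVE = 0.70


@dataclass
class Submission:
    submission_id: str
    risk_score: float      # upstream model output, 0.0 (low) to 1.0 (high)
    reasons: list[str]     # reason codes supplied alongside the score


@dataclass
class Decision:
    submission_id: str
    route: str
    reasons: list[str]
    audit_trail: list[dict] = field(default_factory=list)


def _now() -> str:
    return datetime.now(timezone.utc).isoformat()


def route_submission(sub: Submission) -> Decision:
    """Fast-track clear standard risks and send everything else to a person."""
    if sub.risk_score < AUTO_ACCEPT_BELOW:
        route = "standard_fast_track"
    elif sub.risk_score > REFER_ABOVE:
        route = "senior_underwriter_referral"
    else:
        route = "underwriter_review"
    decision = Decision(sub.submission_id, route, list(sub.reasons))
    decision.audit_trail.append(
        {"event": "model_route", "route": route, "score": sub.risk_score, "at": _now()})
    return decision


def override(decision: Decision, underwriter: str, new_route: str, rationale: str) -> None:
    """Underwriters keep authority; each override records who, what, and why."""
    decision.audit_trail.append(
        {"event": "override", "by": underwriter, "from": decision.route,
         "to": new_route, "rationale": rationale, "at": _now()})
    decision.route = new_route


decision = route_submission(Submission("S-1001", 0.82, ["prior_water_claims", "vacant_dwelling"]))
override(decision, "uw_jsmith", "underwriter_review", "Vacancy resolved; new tenant confirmed")
print(decision.route, len(decision.audit_trail))  # underwriter_review 2
```

Keeping the reason codes on the decision object means the user interface can show them next to the route, and the append-only trail gives audit teams both the model’s recommendation and the human’s final call.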

Claims that don’t stall at intake

Claims teams often feel AI first through operational relief. Intake, triage, document extraction, image review, and status communication all lend themselves to automation.

A practical model is to automate the low-friction parts first. That can mean reading FNOL documents, extracting key fields, categorising claim type, checking for missing information, and routing the file. The next layer is image analysis and workflow orchestration.
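The low-friction intake steps (checking for missing information, categorising claim type, and routing) can be sketched as below. This assumes the fields have already been extracted from FNOL documents; the field names, keyword lists, and queue names are hypothetical, and the keyword matching stands in for what would usually be a trained classifier.

```python
# Hypothetical FNOL fields that a downstream claims system might require.
REQUIRED_FIELDS = ("policy_number", "loss_date", "loss_cause", "contact_phone")

# Deliberately simple keyword rules, checked in order; illustration only.
CLAIM_TYPE_KEYWORDS = {
    "water_damage": ("burst pipe", "flood", "leak", "water"),
    "auto_collision": ("collision", "rear-ended", "accident"),
    "theft": ("stolen", "theft", "break-in"),
}


def categorise(description: str) -> str:
    """Return the first claim type whose keywords appear in the description."""
    text = description.lower()
    for claim_type, keywords in CLAIM_TYPE_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return claim_type
    return "unclassified"


def triage_fnol(extracted: dict) -> dict:
    """Check completeness, categorise, and pick a work queue for one FNOL record."""
    missing = [name for name in REQUIRED_FIELDS if not extracted.get(name)]
    claim_type = categorise(extracted.get("description", ""))
    if missing:
        queue = "request_missing_info"      # chase gaps before an adjuster sees it
    elif claim_type == "unclassified":
        queue = "manual_intake_review"      # a person decides what this is
    else:
        queue = f"{claim_type}_queue"
    return {"claim_type": claim_type, "missing_fields": missing, "queue": queue}


record = {"policy_number": "HO-55821", "loss_date": "2025-01-14", "loss_cause": "",
          "contact_phone": "555-0100",
          "description": "Burst pipe in basement flooded the laundry room"}
result = triage_fnol(record)
print(result["queue"], result["missing_fields"])  # request_missing_info ['loss_cause']
```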

For firms reviewing service channels, offerings such as Eden's AI receptionist service show how AI can support inbound policyholder communication before a claim even reaches an adjuster.

If your claims team is still rekeying fields from PDFs into a core system, the first problem isn’t model sophistication. It’s workflow design.

For a deeper look at how these components fit into a broader operating model, Cleffex has outlined a practical view of AI integration in insurance transformation.

Fraud detection that works in real time

Fraud programmes usually produce value when they move from static rules to layered detection. Traditional rules still matter, but they miss context and create noise. AI adds pattern recognition across claim histories, timing anomalies, image inconsistencies, and behavioural signals.

In practice, insurers get better outcomes when fraud models support investigators rather than trying to replace them outright. A good system scores suspicious activity, surfaces reasons, and helps SIU teams prioritise what deserves attention first.
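A layered approach can be illustrated by combining a couple of rule checks with a simple statistical anomaly test and keeping the reasons attached to each score. This is a sketch under stated assumptions: the claim fields, rule cut-offs, and weights are hypothetical, and a z-score on claim amount stands in for the richer pattern recognition a production model would use.

```python
from statistics import mean, stdev

# Hypothetical weights; in practice these would be calibrated against SIU outcomes.
WEIGHTS = {"early_in_policy": 0.3, "frequent_claimant": 0.3, "unusual_amount": 0.4}


def score_claim(claim: dict, peer_amounts: list[float]) -> tuple[float, list[str]]:
    """Combine rule flags with an amount anomaly check, keeping the reasons."""
    reasons = []
    if claim["days_since_inception"] < 30:
        reasons.append("early_in_policy")
    if claim["prior_claims_12m"] >= 3:
        reasons.append("frequent_claimant")
    mu, sigma = mean(peer_amounts), stdev(peer_amounts)
    if sigma > 0 and (claim["amount"] - mu) / sigma > 2.0:
        reasons.append("unusual_amount")
    return sum(WEIGHTS[r] for r in reasons), reasons


def build_siu_queue(claims: list[dict], peer_amounts: list[float]) -> list[dict]:
    """Rank flagged claims so investigators see the strongest signals first."""
    queue = []
    for claim in claims:
        score, reasons = score_claim(claim, peer_amounts)
        if reasons:  # claims with no signals stay out of the SIU queue
            queue.append({"claim_id": claim["claim_id"], "score": round(score, 2),
                          "reasons": reasons})
    return sorted(queue, key=lambda item: item["score"], reverse=True)


# Hypothetical peer group of comparable claim amounts.
peers = [2100, 2400, 1900, 2600, 2300, 2200, 2500, 2000]
claims = [
    {"claim_id": "C-1", "amount": 9800, "days_since_inception": 12, "prior_claims_12m": 0},
    {"claim_id": "C-2", "amount": 2300, "days_since_inception": 400, "prior_claims_12m": 4},
    {"claim_id": "C-3", "amount": 2100, "days_since_inception": 900, "prior_claims_12m": 0},
]
for item in build_siu_queue(claims, peers):
    print(item["claim_id"], item["score"], item["reasons"])
```

The investigator still decides; the queue only changes the order in which files are opened and states why each one is there.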

Customer service that supports staff, not just call deflection

Customer service AI works best when it shortens routine interactions and gives staff better context. The useful applications are not limited to chatbots. They include agent assist, conversation summarisation, policy explanation, contact centre triage, and guided next-best action.

That’s important for smaller insurers in particular. A lean service team can improve response quality without trying to build a large digital operations unit from scratch.

AI application impact for different insurer sizes

| AI Application | Focus for SMBs (<$10M Revenue) | Focus for Large Enterprises (>$1B Revenue) |
|---|---|---|
| Underwriting | Faster intake, standard-risk automation, referral routing | Enterprise-wide decision support, portfolio optimisation, model governance |
| Claims processing | Document extraction, triage, status updates, basic image review | End-to-end orchestration, image intelligence, core platform integration |
| Fraud detection | Priority scoring for suspicious files, better investigator focus | Real-time cross-channel detection, advanced anomaly monitoring |
| Customer service | AI-assisted email, chat, call summarisation, self-serve support | Omnichannel service architecture, agent assist, personalisation at scale |

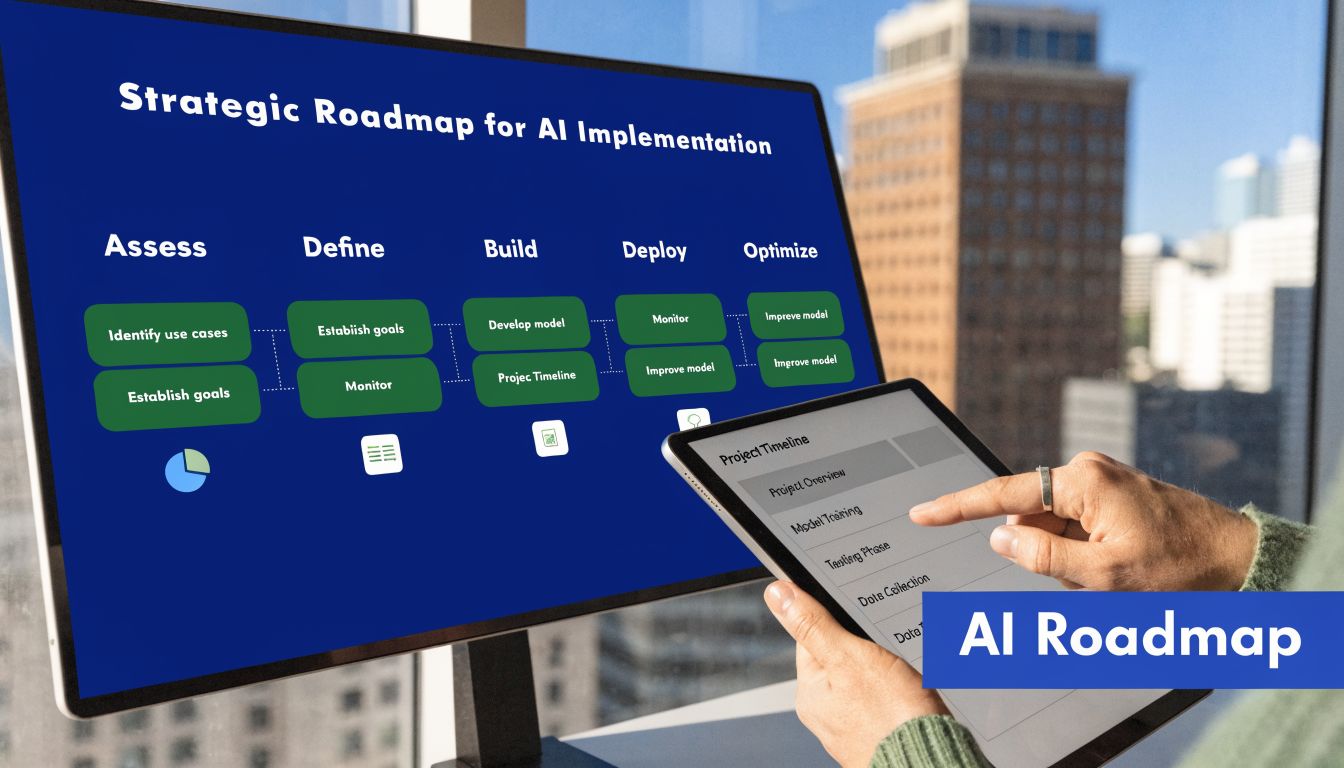

Building Your AI Implementation Roadmap

Most insurers don’t fail because the model is weak. They fail because the implementation path is vague, ownership is fragmented, or the pilot never gets connected to real operations.

Start with one business problem

A strong roadmap begins with a painful, narrow use case. Claims triage, underwriting intake, document extraction, and fraud review are common starting points because teams already understand the friction.

Avoid broad mandates like “use AI in claims”. Define the problem in operational terms instead:

- Too much manual intake work

- Slow review of routine submissions

- Inconsistent fraud escalation

- High service load for repetitive questions

That decision shapes everything that follows, including data requirements, user adoption, and governance controls.

Get the data layer ready before model work

Insurance data is usually spread across core systems, PDFs, broker emails, scanned attachments, call notes, and image stores. If those inputs stay fragmented, even a good model underperforms.

The practical work here is not glamorous, but it’s where the project earns its future ROI:

- Map source systems: know where policy, claims, billing, imaging, and communication data sits

- Standardise core fields: customer identifiers, claim type, loss cause, dates, and status labels need consistent definitions

- Separate regulated data carefully: health, telematics, and sensitive personal information need tighter access and consent controls

- Create reviewable outputs: business users should be able to trace what the model saw and what it produced
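Standardising fields and keeping outputs reviewable can start as small as a mapping layer that also records where each value came from. A minimal sketch, assuming hypothetical source labels, a day-first date convention for slash dates, and a `_lineage` key of our own naming; ambiguous date formats would need a per-source rule in practice.

```python
from datetime import datetime

# Hypothetical mapping from source-system status labels to one shared vocabulary.
STATUS_MAP = {
    "open": "OPEN", "o": "OPEN", "in progress": "OPEN",
    "closed": "CLOSED", "c": "CLOSED", "settled": "CLOSED",
    "reopened": "REOPENED",
}
# Date formats assumed to appear across source systems (slash dates assumed day-first).
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%b %d, %Y")


def parse_date(raw: str) -> str:
    """Return an ISO 8601 date string, trying each known source format."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"Unrecognised date: {raw!r}")


def standardise(record: dict, source_system: str) -> dict:
    """Map one raw claim record onto standard fields and keep a pointer to its origin."""
    return {
        "claim_id": record["id"].strip().upper(),
        "status": STATUS_MAP.get(record["status"].strip().lower(), "UNMAPPED"),
        "loss_date": parse_date(record["loss_date"]),
        # Lineage: which system and which raw values the standard fields came from.
        "_lineage": {"source_system": source_system, "raw": dict(record)},
    }


row = standardise({"id": " clm-0042 ", "status": "Settled", "loss_date": "14/01/2025"},
                  "legacy_claims")
print(row["claim_id"], row["status"], row["loss_date"])  # CLM-0042 CLOSED 2025-01-14
```

Unmapped labels surface as `UNMAPPED` rather than being silently guessed, which gives data owners a concrete list to resolve.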

Pilot with a high-signal use case

Pilot scope should be narrow enough to govern and broad enough to prove value. Fraud detection is often a good candidate because the business outcome is clear and the workflow supports investigator oversight.

The technology has become more practical here. According to Snowflake’s overview of AI in insurance, AI-powered fraud detection using computer vision can achieve 92% precision and reduce false positives by 15% compared with older rule-based systems.

That doesn’t mean every insurer should begin with computer vision. It means the pilot should be selected based on available data, process maturity, and user readiness.

A pilot should prove two things at once. The model can help, and the organisation can absorb the change.
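When judging a pilot, headline figures like these are simple ratios that measure different things. Precision is the share of flagged claims that turn out to be genuinely suspicious, while a false-positive reduction is relative to whatever baseline was chosen. The sketch below uses hypothetical pilot counts (not the figures quoted above) to show that a 15% drop in false positives can coexist with fairly modest precision, which is why it helps to ask for the underlying counts.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Share of flagged claims that were confirmed as genuinely suspicious."""
    return true_positives / (true_positives + false_positives)


def relative_reduction(baseline: float, new: float) -> float:
    """Fractional drop from a baseline, e.g. false positives before and after a change."""
    return (baseline - new) / baseline


# Hypothetical pilot counts over the same set of claims, for illustration only.
rules = {"tp": 120, "fp": 80}   # existing rule-based screening
model = {"tp": 138, "fp": 68}   # candidate model

print(f"Rules precision: {precision(rules['tp'], rules['fp']):.0%}")
print(f"Model precision: {precision(model['tp'], model['fp']):.0%}")
print(f"False-positive reduction: {relative_reduction(rules['fp'], model['fp']):.0%}")
```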

Scale through integration and operating discipline

Once a pilot works, insurers usually hit the hard part. Moving from a controlled test to a production workflow means integrating with policy admin, claims systems, document stores, contact centre tools, and reporting layers.

That stage needs a clear operating model:

- Who owns model monitoring

- Who approves business-rule changes

- Who reviews exceptions

- Who handles privacy and compliance checkpoints

- Who retrains or retires models when performance shifts

For teams evaluating build-versus-buy decisions, Cleffex Digital ltd is one example of a development partner that works on custom insurance AI implementation, and their perspective on insurance AI software development is useful if you’re assessing how much should be productised versus bespoke.

Cloud can help with speed and scalability, but it doesn’t remove governance work. On-prem can help with certain control requirements, but it can slow iteration. The right choice depends on data sensitivity, integration constraints, and how much internal engineering capacity the insurer has.

Navigating Compliance and Ethical AI in Canada

Compliance is where many insurance AI programmes become fragile. A model may perform well in testing and still create risk if consent is unclear, data lineage is weak, or outcomes can’t be explained to compliance teams, customers, or regulators.

Bias is a governance issue, not just a model issue

Canadian insurers need to treat fairness as an operating control. A 2024 OSFI report noted that 65% of Canadian financial institutions identified AI-related bias as a top governance risk, yet only 28% had implemented effective mitigation frameworks, as discussed in this review of AI underwriting and governance risk.

That gap matters because underwriting and claims models can absorb historical bias from legacy decisions, incomplete data, proxy variables, or poor segmentation choices. The risk isn’t only reputational. It can affect customer outcomes, complaint volumes, internal audit findings, and regulator confidence.

A practical bias mitigation approach includes:

- Training data review: check whether historical data reflects skewed approval, pricing, or denial patterns

- Feature governance: remove or constrain variables that act as problematic proxies

- Outcome testing: compare outputs across relevant customer groups and distribution channels

- Escalation design: ensure edge cases route to human review instead of silent automation
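Outcome testing can begin with a plain comparison of approval rates across groups. This sketch uses distribution channels as the groups and hypothetical counts; the 0.8 ratio is an assumed alert level borrowed from the “four-fifths” heuristic in US employment practice, not a Canadian regulatory threshold, and testing against other customer groups may require data that needs its own privacy handling.

```python
from collections import defaultdict


def approval_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Approval rate per group from (group_label, approved) pairs."""
    totals: dict[str, int] = defaultdict(int)
    approvals: dict[str, int] = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        approvals[group] += int(approved)
    return {group: approvals[group] / totals[group] for group in totals}


def flag_disparities(rates: dict[str, float], threshold: float = 0.8) -> dict[str, float]:
    """Groups whose rate, relative to the best-served group, falls below the threshold."""
    best = max(rates.values())
    if best == 0:
        return {}
    return {g: round(r / best, 2) for g, r in rates.items() if r / best < threshold}


# Hypothetical approval outcomes by distribution channel.
decisions = ([("broker", True)] * 80 + [("broker", False)] * 20
             + [("direct", True)] * 60 + [("direct", False)] * 40
             + [("online", True)] * 78 + [("online", False)] * 22)
rates = approval_rates(decisions)
print(flag_disparities(rates))  # {'direct': 0.75}
```

A flag is a prompt for review, not a verdict: the gap may reflect legitimate risk differences, which is exactly what the training-data and feature-governance steps are meant to establish.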

PIPEDA changes what “usable data” means

In insurance, teams often assume that if data exists, it can be used for AI. Under Canadian privacy expectations, that assumption is dangerous.

PIPEDA affects how insurers collect, use, retain, and disclose personal information. That becomes especially important for telematics, health-related claims information, behavioural data, and third-party enrichment sources. If consent terms are vague or the use case extends beyond what was reasonably understood, the AI project may be technically sound and still operationally non-compliant.

This changes project design in practical ways:

- Consent language must match intended use

- Data minimisation matters

- Model inputs should be documented at field level

- Access controls need to reflect sensitivity

- Human review remains important for high-impact decisions
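Field-level documentation and data minimisation can be enforced as a gate in the model pipeline. The sketch below assumes a hypothetical register, agreed with privacy and legal teams, listing the purposes each field’s consent covers; it illustrates the design pattern only and does not by itself make a model PIPEDA-compliant.

```python
# Hypothetical field-level register: each model input and the purposes its
# collection notice and consent cover, as agreed with privacy and legal teams.
CONSENTED_PURPOSES: dict[str, frozenset[str]] = {
    "claim_amount": frozenset({"claims_handling", "fraud_detection"}),
    "loss_cause": frozenset({"claims_handling", "fraud_detection"}),
    "telematics_speed": frozenset({"usage_based_pricing"}),
    "health_notes": frozenset({"claims_handling"}),
}


def vet_model_inputs(requested: list[str], purpose: str) -> tuple[list[str], dict[str, str]]:
    """Keep fields documented and consented for this purpose; record why others were dropped."""
    allowed: list[str] = []
    rejected: dict[str, str] = {}
    for name in requested:
        purposes = CONSENTED_PURPOSES.get(name)
        if purposes is None:
            rejected[name] = "not in field register"
        elif purpose not in purposes:
            rejected[name] = f"no documented consent for {purpose}"
        else:
            allowed.append(name)
    return allowed, rejected


allowed, rejected = vet_model_inputs(
    ["claim_amount", "loss_cause", "telematics_speed", "credit_score"], "fraud_detection")
print(allowed)   # ['claim_amount', 'loss_cause']
print(rejected)  # telematics_speed and credit_score, each with a reason
```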

The safest Canadian AI programmes aren’t the ones with the most controls on paper. They’re the ones where product, legal, compliance, and operations agree on data use before deployment starts.

Explainability is now part of delivery quality

Executives sometimes hear “explainable AI” and treat it as a regulator’s wish list. It’s more immediate than that. If an underwriting or claims outcome can’t be explained internally, frontline staff won’t trust it, compliance teams won’t defend it, and customers won’t accept it when a decision is contested.

That’s why explainability should be designed into vendor selection, model monitoring, and workflow UX. Users need reason codes, confidence thresholds, override paths, and audit logs. Compliance teams need lineage, validation records, and policy mapping.

For a deeper operational checklist, this guide to AI for insurance compliance in Canada is a useful reference point.

How to Choose the Right AI Partner or Platform

Most insurers won’t build every component themselves. The question is how to choose a partner without locking the organisation into a tool that looks strong in a demo and weak in production.

What matters more than a polished demo

Insurance executives should look past generic AI claims and test whether the vendor understands the operating environment. A vendor that talks fluently about model accuracy but can’t discuss consent, auditability, exception routing, or policy admin integration probably isn’t ready for an insurance deployment.

Use these criteria to pressure-test the fit:

- Insurance process knowledge: Can the team speak concretely about underwriting, claims, fraud, broker flows, and service operations?

- Canadian compliance awareness: Do they understand PIPEDA, OSFI expectations, and the practical need for explainability and human oversight?

- Integration capability: Can their solution connect to core systems, document repositories, CRM platforms, and communication tools without forcing a full stack replacement?

- Support model: Will they help with pilot design, change management, and post-launch monitoring, or do they stop at implementation?

- Commercial clarity: Is pricing transparent enough to model cost at pilot stage and production scale?

Questions worth asking in procurement

A good procurement process for AI solutions in insurance should sound more like operational due diligence than software shopping.

Ask for:

- A sample workflow design, not just a feature list

- Evidence of explainability options for business users

- Details on data handling and retention

- A clear escalation model when the AI is uncertain

- A view of ongoing governance, including retraining, monitoring, and model retirement

Off-the-shelf versus custom build

Off-the-shelf platforms can speed up implementation when the use case is common and the insurer can work within the product’s assumptions. That often suits contact centre support, document extraction, or standard service automation.

Custom development makes more sense when the insurer has unusual underwriting logic, specific data handling constraints, legacy integration issues, or a need to embed AI thoroughly into proprietary workflows.

The strongest partners are usually the ones willing to say no to unnecessary complexity. If a business problem can be solved with structured automation and modest AI support, that’s often better than forcing a full machine learning initiative.

Measuring Success and Future-Proofing Your Strategy

AI programmes drift when success is measured too narrowly. A model can perform well in technical testing and still fail the business if adoption is weak, handoffs are messy, or compliance overhead cancels out the gain.

Measure business outcomes first

For insurers, the most useful scorecard usually combines operational, financial, and customer-facing indicators:

- Operational metrics: claims turnaround, underwriting cycle time, referral volume, rework rates

- Risk metrics: accuracy of escalation, fraud investigation yield, consistency of decisions

- Service metrics: response quality, contact centre handling support, customer satisfaction signals

- Governance metrics: override rates, exception patterns, audit readiness, policy adherence

Customer experience should stay on that list. IBM research, as referenced in the NAIC survey on insurer AI adoption, found that early adopters of AI in insurance saw 14% higher customer retention and 48% higher Net Promoter Scores when generative AI enhanced customer-facing systems.
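Several of the operational and governance metrics in the scorecard can be computed directly from decision logs. A minimal sketch, assuming each hypothetical log record notes whether the file was referred, whether a person overrode the model, the override reason, and the cycle time in hours.

```python
from collections import Counter
from statistics import median


def scorecard(records: list[dict]) -> dict:
    """Summarise referral, override, and cycle-time metrics from decision records."""
    n = len(records)
    override_reasons = Counter(r["override_reason"] for r in records if r["overridden"])
    return {
        "referral_rate": sum(r["referred"] for r in records) / n,
        "override_rate": sum(r["overridden"] for r in records) / n,
        "median_cycle_hours": median(r["cycle_hours"] for r in records),
        # Recurring override reasons point at exception patterns worth investigating.
        "top_override_reasons": override_reasons.most_common(3),
    }


# Hypothetical decision log for a handful of underwriting files.
log = [
    {"referred": False, "overridden": False, "override_reason": None, "cycle_hours": 6},
    {"referred": True, "overridden": True, "override_reason": "missing_inspection", "cycle_hours": 30},
    {"referred": True, "overridden": False, "override_reason": None, "cycle_hours": 20},
    {"referred": False, "overridden": True, "override_reason": "missing_inspection", "cycle_hours": 4},
]
print(scorecard(log))
```

A rising override rate concentrated on one reason is often a workflow or data problem rather than a model problem, which is why override reasons are worth logging alongside the rate.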

Build for adaptation, not permanence

The future-proofing mindset is straightforward. Don’t treat AI as a one-time rollout. Treat it as a managed capability that will need tuning as products, regulations, customer expectations, and data sources change.

That means keeping a few disciplines in place:

- Review models regularly: what worked at launch may drift as claims patterns and customer behaviour shift

- Keep humans in the loop: especially for high-impact decisions, appeals, and edge cases

- Design reusable components: document pipelines, audit logs, and orchestration layers should support more than one use case

- Expand only after adoption is real: one well-run production workflow is more valuable than several disconnected pilots
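One common way to make regular model review concrete is the population stability index (PSI), which compares the distribution of a model score or input at launch with a recent window. The sketch below is one variant, assuming equal-width bins over the baseline range and a small floor to avoid log(0); the sample scores are hypothetical, and the 0.1 and 0.25 alert levels are widely used rules of thumb rather than regulatory thresholds.

```python
import math


def psi(baseline: list[float], recent: list[float], bins: int = 10) -> float:
    """Population stability index of `recent` against `baseline`, using equal-width bins."""
    lo, hi = min(baseline), max(baseline)
    edges = [lo + (hi - lo) * i / bins for i in range(1, bins)]  # interior bin edges

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Bin index = number of interior edges the value exceeds;
            # values outside the baseline range land in the end bins.
            counts[sum(v > edge for edge in edges)] += 1
        return [max(c / len(values), 1e-6) for c in counts]  # floor avoids log(0)

    expected, actual = shares(baseline), shares(recent)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


launch_scores = [i / 100 for i in range(100)]        # hypothetical scores at launch
recent_scores = [0.5 + i / 200 for i in range(100)]  # hypothetical scores this quarter
value = psi(launch_scores, recent_scores)
# Common rule of thumb: below 0.1 stable, 0.1 to 0.25 watch, above 0.25 investigate.
print(f"PSI = {value:.2f}", "investigate" if value > 0.25 else "ok")
```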

The insurers that get long-term value from AI don’t chase every new capability. They build a repeatable way to select, govern, and scale the right ones.

If your team is assessing where AI fits in underwriting, claims, fraud, or customer operations, Cleffex Digital ltd can support the practical side of the decision. That includes custom software development, workflow integration, and compliant AI implementation for Canadian insurers that need a realistic path from pilot to production.